The Attorney Broflovski principle, or do-it-yourself cloud load balancing

Each party is represented by Gerald Broflovski, a lawyer from South Park, who expects to receive a good commission. The opposing side in each case is represented by ... Gerald Broflovski! It seems that Kyle’s dad stands to make serious money regardless of the outcome of the case.

South Park, episode 306, “Sexual Harassment Panda”

Smug Alert!

Not long ago, our colleagues spoke at the HighLoad++ conference about load balancing in the Google and Amazon clouds, as well as DNS balancing with the gdnsd service. It is a great introduction to the topic, worth a look for anyone whom life has already forced to run more than one frontend, and a practical guide if you have to deal with cloud hosting.

Fortunately, there are times when you get to enjoy working with real hardware rather than clouds. The author of these lines loves real hardware and is ready to talk for hours about its advantages:

- You are not fleeced on traffic. Per-gigabyte traffic billing is a favorite source of income for cloud platforms spoiled by the attention of startups. Bare-metal providers, by contrast, live in fierce competition for a thriftier customer, and for them it is normal to include a large traffic allowance for free. There are also “packaged” clouds, such as Linode, but there you are limited by the power of the instances on offer and will most likely find, to your dismay, that building even a moderately serious service takes a lot of them, so you end up paying for traffic in another way.

- You can buy more servers predictably. For example, the description of AWS limits takes up three dozen A4 pages. Each time you suddenly hit a limit, you have to prove to Amazon that it needs raising, and Amazon decides whether you are worthy. Requests from small customers take at least 3 days to process, more often a week. Meanwhile, the best dedicated providers hand over hardware immediately or the next day; all they care about is that you pay your bills on time and obey the law.

- Life on hardware pushes you toward proven open source rather than proprietary services with mysterious properties that you cannot change if they are bad or repair if they break. This problem is covered well in the last two sections of this report.

- Some things only really work properly on carefully selected hardware. A good example is our favorite DBMS, Aerospike. The number of problems with it is directly proportional to the age of the SSDs, so we require not just real physical SSDs but brand-new ones. Moving to NVMe interfaces, where possible, lifted our Aerospike operations into the exosphere and cut the load fivefold. Aerospike users in the clouds can only smile sadly at this point and go back to fixing the cluster, which has fallen apart yet again.

Many dedicated providers have learned to join servers into virtual networks, so expanding a farm with them is not a problem. A popular exception is Hetzner, where you still have to reserve rack units and plan for space, but I hope smart switching will reach them too. With balancing, however, things are worse.

Where it makes sense to discuss balancing with a provider, you will be offered a modern descendant of something like this:

This is specialty hardware: it will, of course, cost an outrageous amount of money, and configuring it requires special knowledge.

And now it's time to remember that ...

Chef, what do we have for lunch today?

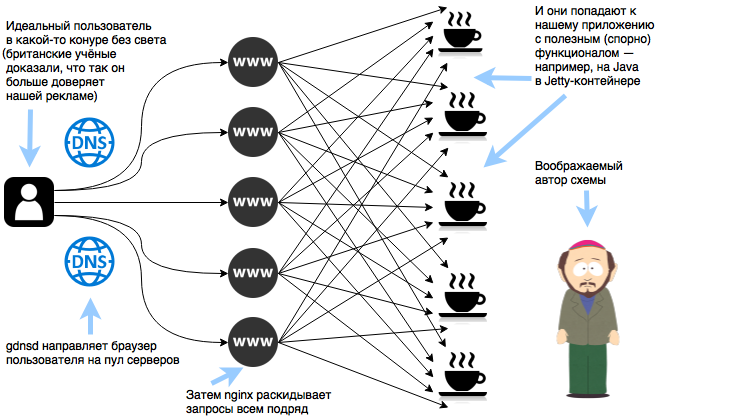

Your farms are probably already run by one of the automation tools, which means that mass changes are cheap. And nginx most likely serves as the HTTP frontend on the same servers that run the application. This makes it easy to build an “each with each” balancing scheme: every server is a balancer, and every server is also an application that answers requests.

Configure the server pool in gdnsd:

    ; Health check: an external script that is passed
    ; the IP address of the node being checked
    service_types => {
        adserver => {
            plugin => extmon,
            cmd => ["/usr/local/bin/check-adserver-node-for-gdnsd", "%%IPADDR%%"]
            down_thresh => 4, ; 4 failures in a row? Mark the node down.
            ok_thresh => 1,   ; one success? Back up!
            interval => 20,   ; check every 20 seconds
            timeout => 5,     ; give each check 5 seconds
        } ; adserver
    } ; service_types

    plugins => {
        multifo => {
            ; If at least 1/3 of the servers are up, DNS returns only the live ones;
            ; if fewer are up, all addresses are returned
            up_thresh => 0.3,
            adserver-eu => {
                service_types => adserver,
                www1-de => 192.168.93.1,
                www2-de => 192.168.93.2,
                www3-de => 192.168.93.3,
                www4-de => 192.168.93.4,
                www5-de => 192.168.93.5,
            } ; adserver-eu
        } ; multifo
    } ; plugins

We use this configuration in the DNS zone file:

    eu.adserver.sample. 60 DYNA multifo!adserver-eu

Make a server pool for nginx and roll out the configuration across the farm:

    upstream adserver-eu {
        server localhost:8080 max_fails=5 fail_timeout=5s;
        server www1-de.adserver.sample:8080 max_fails=5 fail_timeout=5s;
        server www2-de.adserver.sample:8080 max_fails=5 fail_timeout=5s;
        server www3-de.adserver.sample:8080 max_fails=5 fail_timeout=5s;
        server www4-de.adserver.sample:8080 max_fails=5 fail_timeout=5s;
        server www5-de.adserver.sample:8080 max_fails=5 fail_timeout=5s;
        keepalive 1000;
    }

If there are more than 25 servers in the rotation, remember to split them into groups of 25 under a single name and use dynamic CNAMEs (DYNC) in gdnsd instead of A records.
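Splitting a long server list into groups of 25 is easy to automate. The following is a minimal Python sketch, not our actual tooling: the function name `split_into_groups` and the `adserver-eu-N` group naming scheme are assumptions made purely for illustration.

```python
from typing import Dict, List

MAX_PER_GROUP = 25  # group size mentioned in the text


def split_into_groups(pool: str, hosts: List[str],
                      size: int = MAX_PER_GROUP) -> Dict[str, List[str]]:
    """Split hosts into consecutive groups of at most `size` entries.

    Group names (pool-1, pool-2, ...) are a hypothetical naming scheme.
    """
    if size <= 0:
        raise ValueError("size must be positive")
    groups: Dict[str, List[str]] = {}
    for start in range(0, len(hosts), size):
        name = f"{pool}-{start // size + 1}"
        groups[name] = hosts[start:start + size]
    return groups


if __name__ == "__main__":
    # 60 hypothetical servers named like the ones in the config above
    hosts = [f"www{i}-de" for i in range(1, 61)]
    for name, members in split_into_groups("adserver-eu", hosts).items():
        print(name, len(members))
```

Each resulting group could then become its own resource in the gdnsd plugin configuration; how the groups are tied together under one name depends on your zone layout.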

The server list can, if desired, be filled in automatically from gdnsd or from the automation system. For example, we use Puppet, and a special cron script keeps the lists of servers of each type registered on the Puppet master up to date. The localhost line protects against the list ending up empty because of an error: in that case each nginx proxies only to its own local application and no catastrophe occurs.
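Generating the upstream block from a server list might look roughly like this. It is a sketch under assumptions, not our cron script: the function `render_upstream` and its parameters are hypothetical, and where the host list comes from (Puppet, gdnsd, anything else) is left out. The point it illustrates is that the localhost line is always emitted, so an empty list still produces a working pool.

```python
from typing import Iterable


def render_upstream(name: str, hosts: Iterable[str], port: int = 8080,
                    domain: str = "adserver.sample") -> str:
    """Render an nginx upstream block; localhost is always the first server."""
    params = "max_fails=5 fail_timeout=5s"
    lines = [
        f"upstream {name} {{",
        # Safety net: present even if the host list is empty due to an error
        f"    server localhost:{port} {params};",
    ]
    # Sort and de-duplicate so repeated runs produce identical output
    for host in sorted(set(hosts)):
        lines.append(f"    server {host}.{domain}:{port} {params};")
    lines.append("    keepalive 1000;")
    lines.append("}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_upstream("adserver-eu", ["www2-de", "www1-de"]))
    print(render_upstream("adserver-eu", []))  # only localhost remains
```

Sorting the hosts keeps the generated file stable between runs, which avoids needless nginx reloads when nothing has changed.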

We light, boys, we light!

So, what did we get:

- Excellent failover. The remaining risks are the network (solved by spreading the application across several regions with independent power and networking) and configuration (nothing stops you from rolling out an nginx or gdnsd change that quickly breaks everything, though the recipes for dealing with that are well known too).

- The problems of "warming up" the pool and of running out of balancer capacity do not exist. Your web application is guaranteed to die first.

- Uniform traffic consumption and network load distribution. Need to take 5 Gbit/s of traffic on 10 servers with gigabit links? No problem. Just remember to move the traffic between balancers and applications onto the internal network, and make sure the provider does not sell you a switch whose total Internet uplink for those 10 servers is 1 Gbit/s.

- "Smooth" deploys. When we used DNS balancing alone, we found that many of our partners (SSPs) were thrown badly by our servers dropping out of the pool one after another. The situation was made worse by the fact that our Java applications load a lot of the data they need into memory during initialization, and that takes time. Cross-balancing made the process almost invisible to the other side.
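The bandwidth caveat in the list above comes down to simple arithmetic. Here is a small Python sketch of that check; the function names are hypothetical, traffic is assumed to be spread perfectly evenly, and internal balancer-to-application traffic and protocol overhead are ignored.

```python
from typing import Optional


def per_server_gbps(total_gbps: float, servers: int) -> float:
    """External traffic per server if the load is spread evenly."""
    if servers <= 0:
        raise ValueError("servers must be positive")
    return total_gbps / servers


def fits(total_gbps: float, servers: int, nic_gbps: float,
         shared_uplink_gbps: Optional[float] = None) -> bool:
    """True if the traffic fits each server's link and the shared uplink, if any."""
    if per_server_gbps(total_gbps, servers) > nic_gbps:
        return False
    if shared_uplink_gbps is not None and total_gbps > shared_uplink_gbps:
        return False
    return True


if __name__ == "__main__":
    # 5 Gbit/s on 10 gigabit servers: 0.5 Gbit/s each
    print(fits(5, 10, nic_gbps=1))
    # Same farm behind a switch with a 1 Gbit/s total uplink
    print(fits(5, 10, nic_gbps=1, shared_uplink_gbps=1))
```

The second call is the trap described above: every server has headroom, but the shared uplink does not.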

An Insult to Mr. Twig

Free balancing has a price, and the victim is sticky sessions. However, the free version of nginx offers IP-based balancing, and modern NoSQL databases such as Aerospike can serve your applications as a reliable shared session store with fast response times. Finally, it is possible, if unpatriotic, to switch to HAProxy, which can route users consistently across multiple balancers by a single rule. In short, when we need sticky sessions, we will fight for them with the means at hand.
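Why IP-based balancing keeps working when every server is a balancer can be shown with a short Python sketch. This is an illustration of the idea only, not nginx's actual hashing algorithm: `pick_backend` and the SHA-256 hash are assumptions. The only detail borrowed from the nginx documentation for `ip_hash` is the key: the first three octets of an IPv4 address, or the whole IPv6 address.

```python
import hashlib
import ipaddress
from typing import List


def hash_key(client_ip: str) -> bytes:
    """IPv4: first three octets; IPv6: the whole address."""
    addr = ipaddress.ip_address(client_ip)
    if addr.version == 4:
        return addr.packed[:3]
    return addr.packed


def pick_backend(client_ip: str, backends: List[str]) -> str:
    """Choose a backend that depends only on the client IP and the server set."""
    if not backends:
        raise ValueError("no backends")
    ordered = sorted(backends)  # identical order on every balancer
    digest = hashlib.sha256(hash_key(client_ip)).digest()
    index = int.from_bytes(digest[:8], "big") % len(ordered)
    return ordered[index]


if __name__ == "__main__":
    pool = [f"www{i}-de" for i in range(1, 6)]
    # Two balancers holding the same list in a different order agree
    print(pick_backend("203.0.113.7", pool))
    print(pick_backend("203.0.113.7", list(reversed(pool))))
```

Because the choice depends only on the client address and the server set, it does not matter which balancer DNS sends the client to. The weak spot of this simple modulo scheme is that adding or removing a server remaps most clients.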

Numbers

Each of our 20-core servers now handles up to 15,000 requests per second, which is close to the practical ceiling of our Java application. Across all clusters, at peak we balance and process more than half a million requests per second, with 5 Gbit/s of incoming and 4 Gbit/s of outgoing traffic, not counting the CDN. Yes, incoming traffic exceeding outgoing is typical of a DSP: auction bid requests far outnumber meaningful responses to them.
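For a feel of what these figures imply, here is a back-of-the-envelope calculation in Python. It is only a rough estimate from the rounded numbers above: it treats "more than half a million" as exactly 500,000, assumes every server runs at its 15,000 requests per second ceiling, and takes 1 Gbit as 10^9 bits. The function names are hypothetical.

```python
import math

PEAK_RPS = 500_000        # lower bound taken from the text
PER_SERVER_RPS = 15_000   # per-server ceiling from the text
IN_GBPS = 5               # incoming traffic at peak
OUT_GBPS = 4              # outgoing traffic at peak


def min_servers(peak_rps: int, per_server_rps: int) -> int:
    """Fewest servers that can carry the peak with no headroom at all."""
    return math.ceil(peak_rps / per_server_rps)


def bytes_per_request(gbps: float, rps: int) -> float:
    """Average bytes on the wire per request at the given rate."""
    return gbps * 1e9 / 8 / rps


if __name__ == "__main__":
    print(min_servers(PEAK_RPS, PER_SERVER_RPS))
    print(bytes_per_request(IN_GBPS, PEAK_RPS))
    print(bytes_per_request(OUT_GBPS, PEAK_RPS))
```

Under these assumptions the peak needs at least 34 servers, and an average request carries about 1,250 bytes in and 1,000 bytes out. Since the real request rate is higher than 500,000, the true per-request averages are somewhat lower.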

The author of the article is igors

Source: https://habr.com/ru/post/329012/
