Centralized logging in MongoDB

From philosophy to materiel.

We are developing an ERP system optimized for high loads . As a result, clustering is present in the system. And each of the cluster nodes maintains its own log. The log contains error messages, various progress messages from application developers, and so on.

How we implemented journaling - under the cut.

To begin with, according to the precepts of the ancestors they walked around the rake.

We had

- Multiple clusters

- In each of which several nodes

- Crowd of developers

- Hundreds of client desktop applications.

- 20-30mb application and system code with trace and data dump.

All applications through NLog wrote to the files.

Logging to a file had only one advantage — it was very easy to administer, and that dubious one — log files also need to be rotated.

In all other respects it is a failure:

So, we needed a journaling solution that would make it easy to search for messages from various sources, easy to understand the message, save objects in an easily readable structured format, and lastly, the solution should be easy to install, maintain, be convenient.

After going through several options (Elasticsearch and other similar mechanisms), they stopped at a bunch of SeriLog and MongoDB .

SeriLog perfectly solved the problem of structured event storage. MongoDB is an easy-to-admin base, with enough popularity, a user-friendly interface and support for C #, is actively developing.

In MongoDB, the quality of documenting the driver for C # was somewhat upsetting, so I had to tinker in search of support for ad-hoc requests. However, it is not critical.

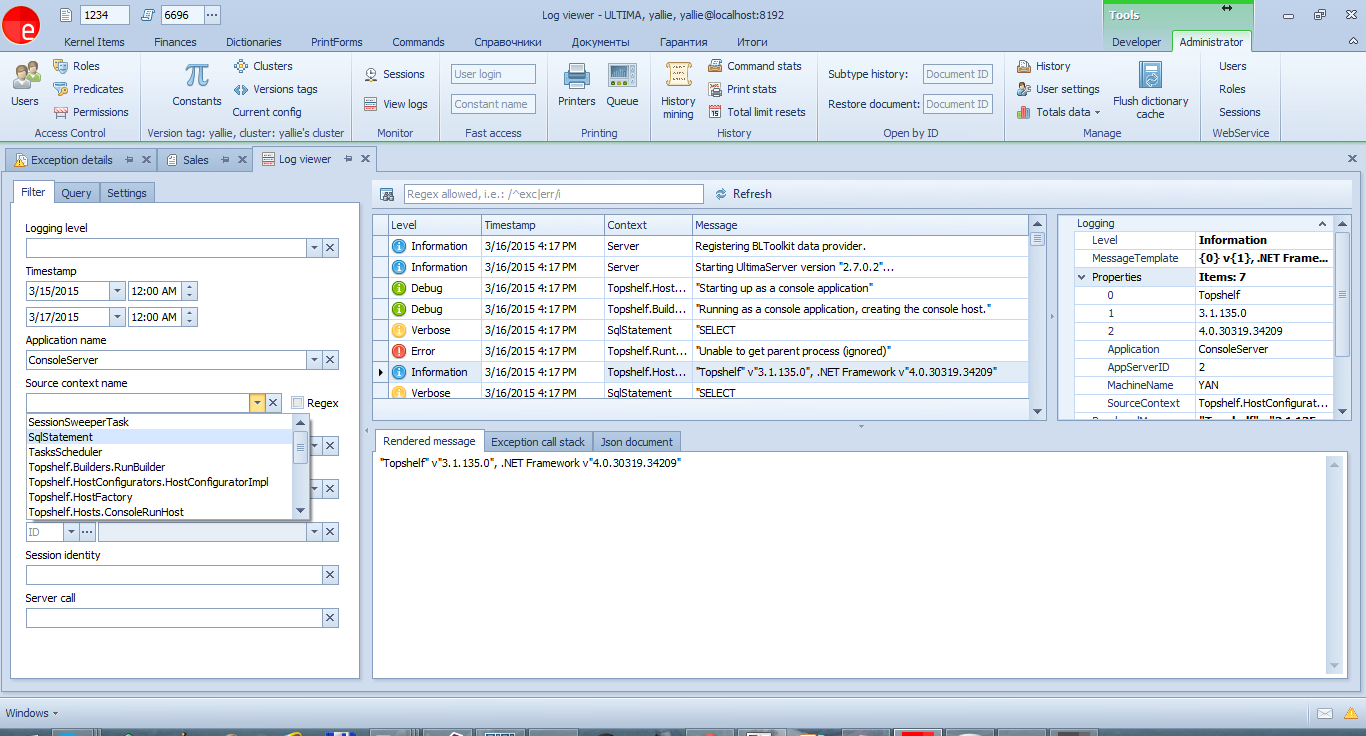

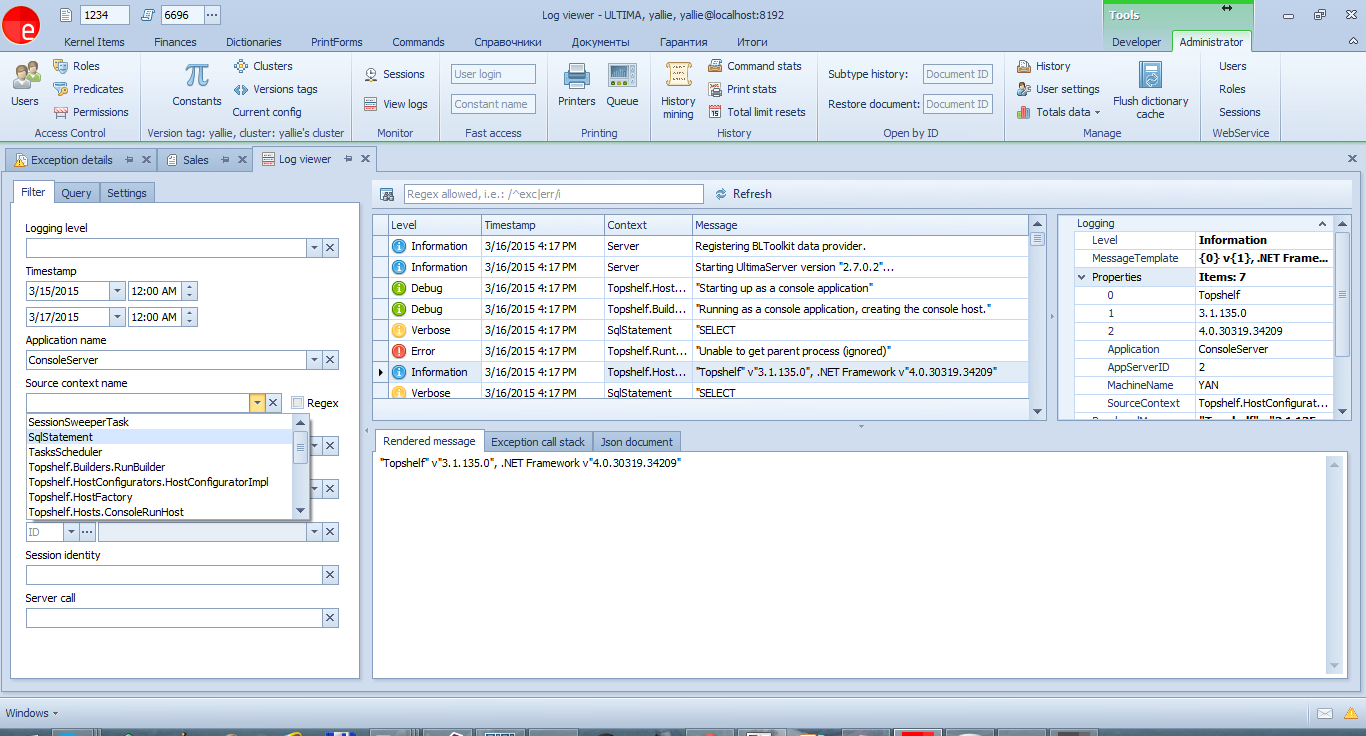

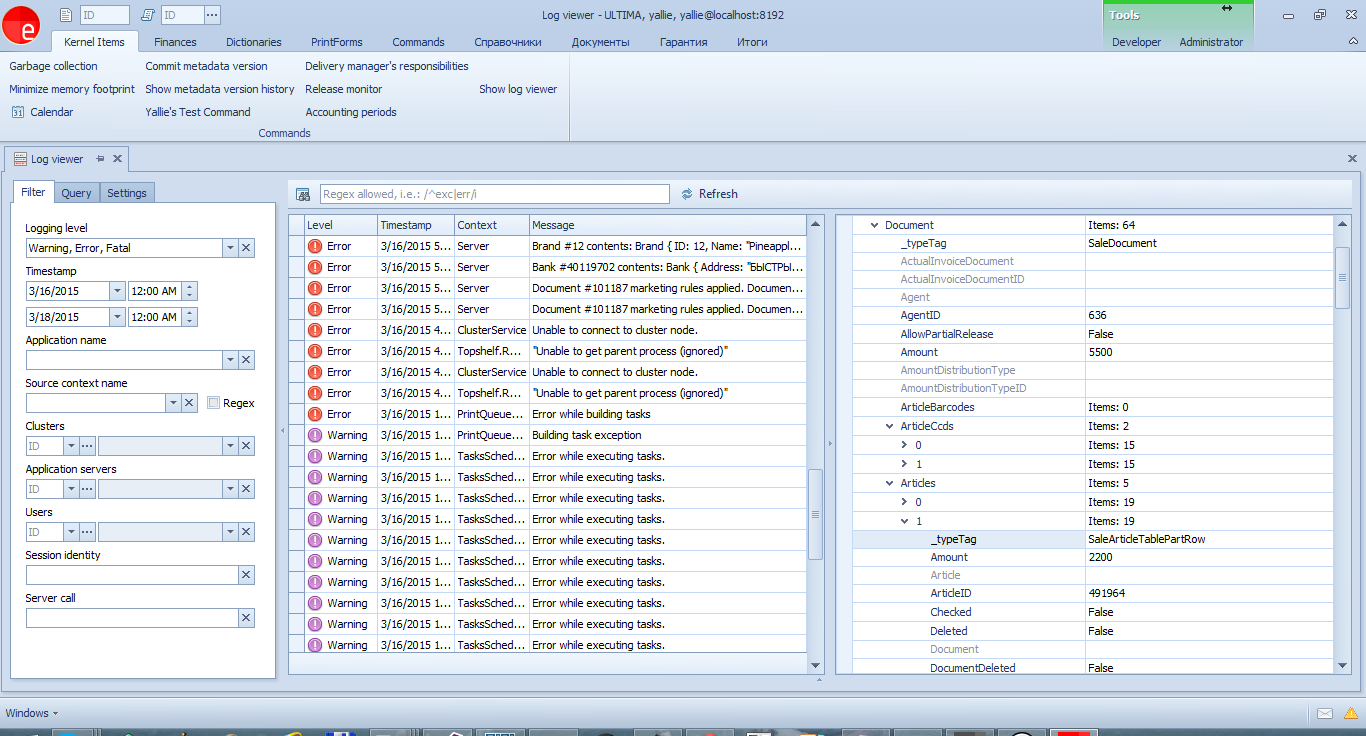

As a result, we received such an interface for viewing events, which is available to any developer:

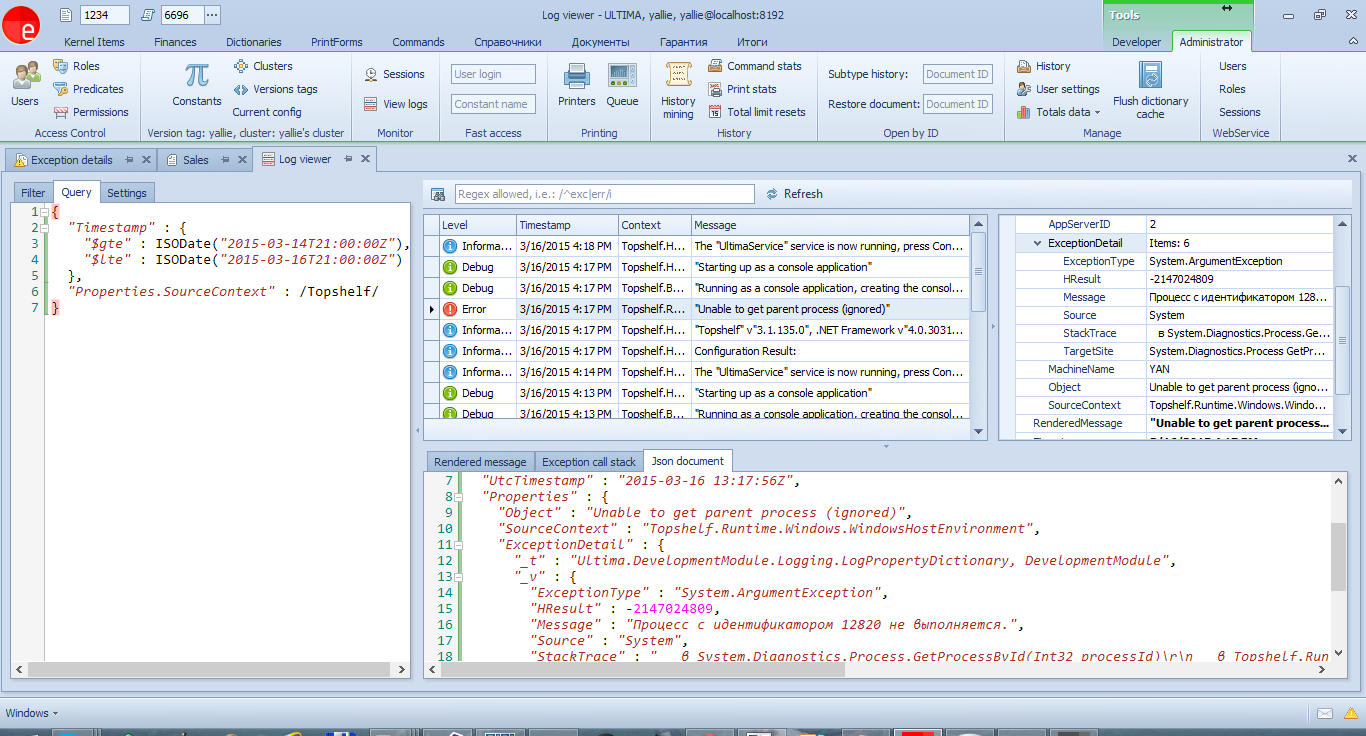

For simple cases, you can use the filter on the first tab. And on the second - a full query window, where you can write arbitrary queries.

A nice feature is that SeriLog saves the message template, which allows (having the program code before your eyes) to find all the relevant messages.

')

Well, SourceContext allows you to filter by message source - from a server, client, or SQL queries.

The latter is the most convenient means of tracking which SQL queries are executed to the database in order to optimize them.

A small digression.

In general, there are 2 tools for tracing SQL queries in our platform.

Let's go back to the above example:

In this case, the entire list of parameters will be attached to the journal event,

and in the log you can find a record about the execution of the command with a specific value

the desired parameter.

The example is somewhat contrived, since all SQL queries are already logged.

Here is a more real one:

And the type of entries in the log:

When logging the state of a document or other difficult object, it is better not to save its contents in the message:

The message will look like this:

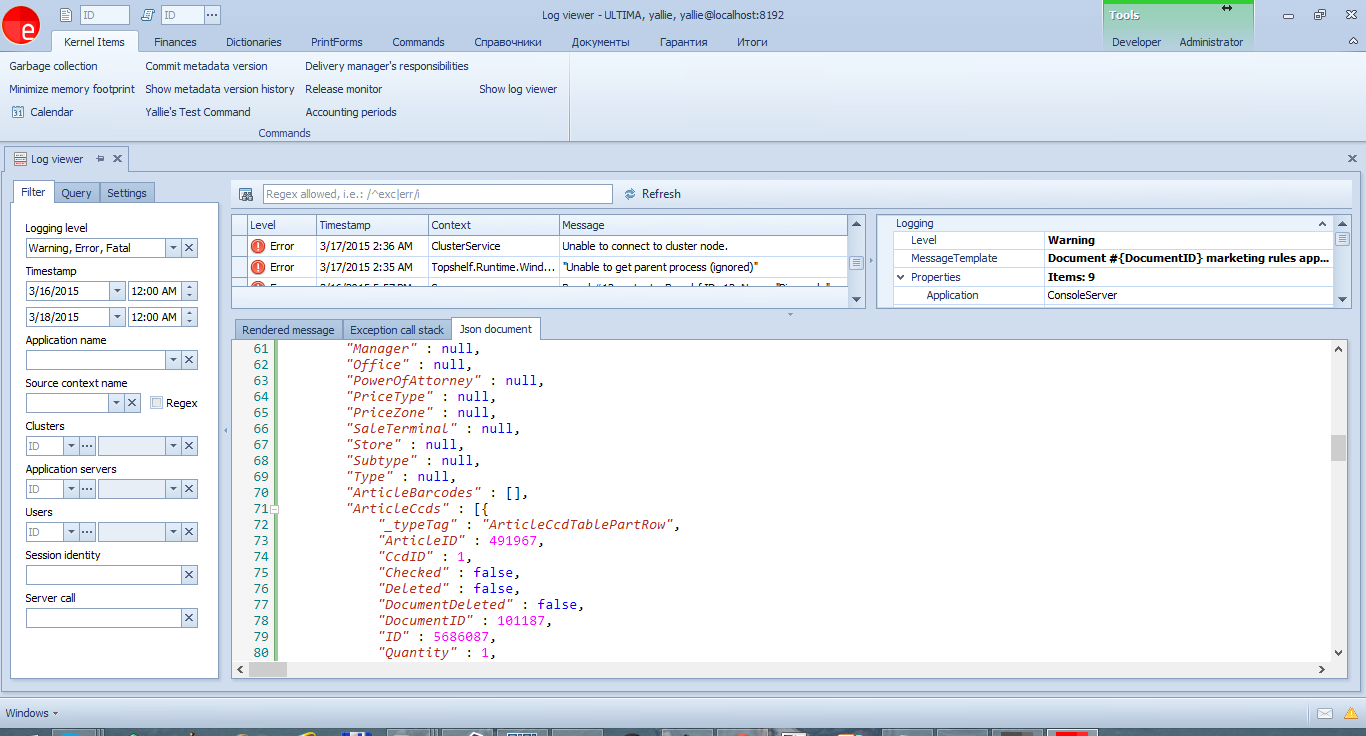

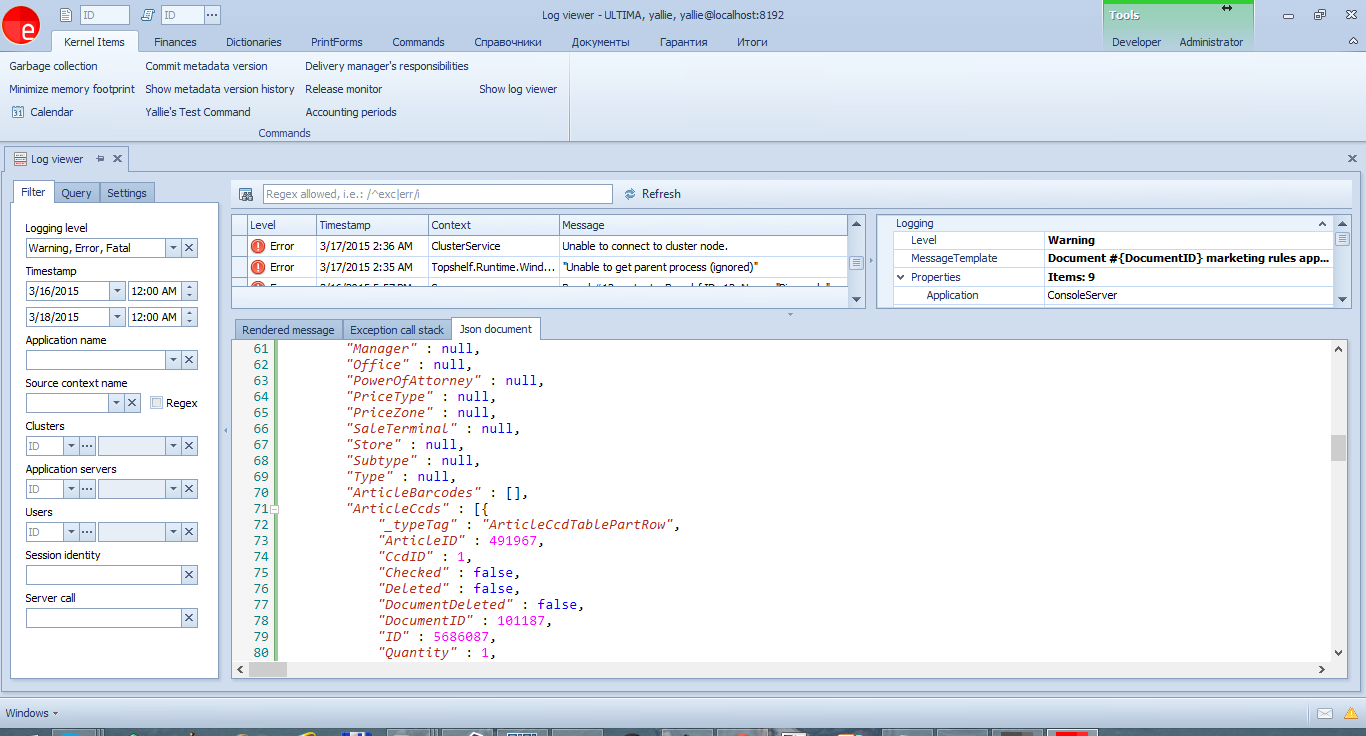

It would be more correct to display only the document number in the message, and pass the contents in the parameters. All parameters can be viewed in JSON:

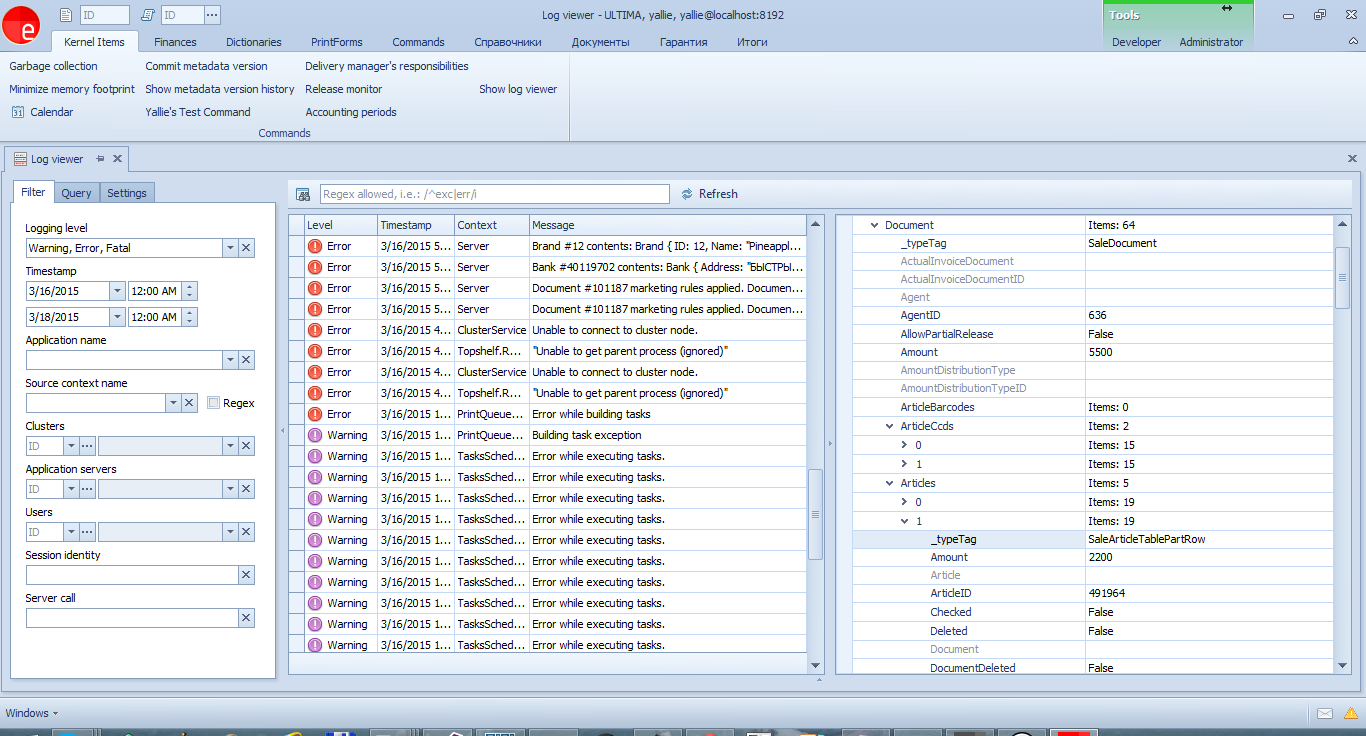

or in a structured form in a property grid:

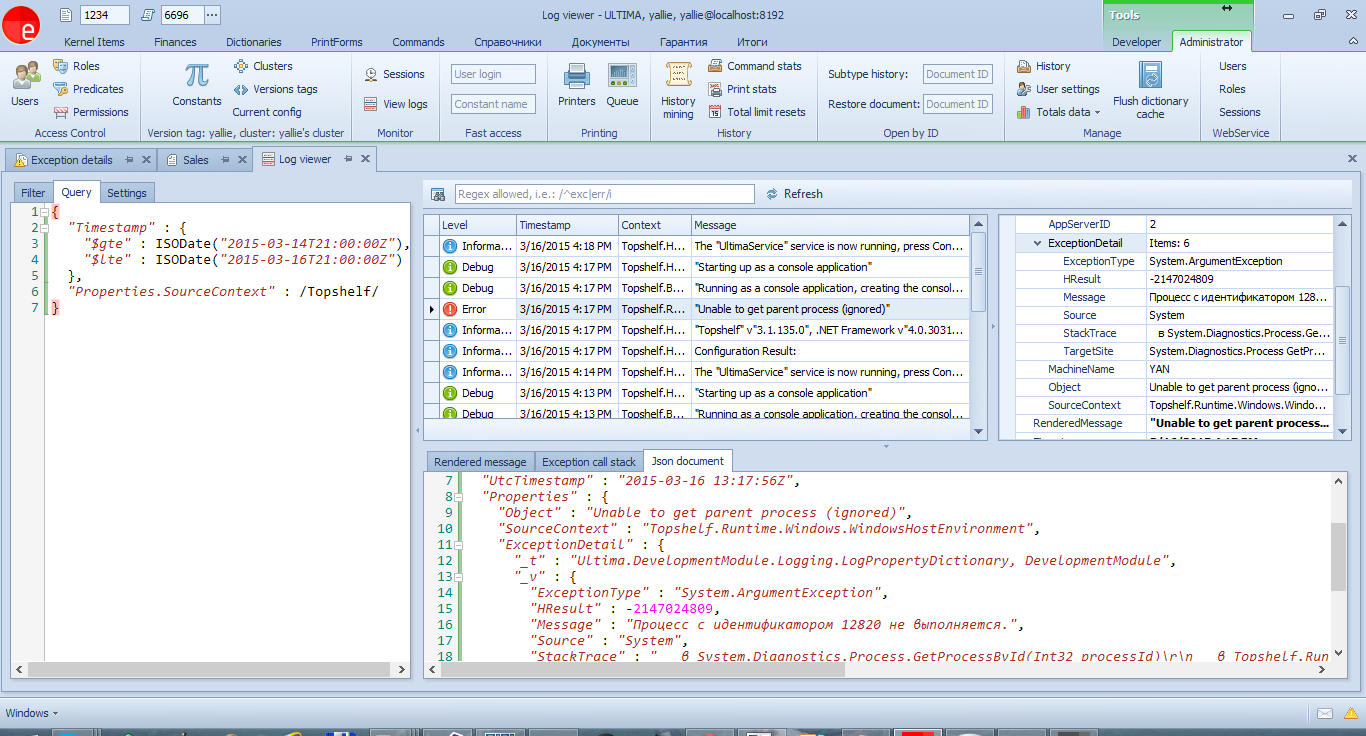

When exceptions are logged, their internal structure will be preserved, including

including the HResult, CallStack properties and the whole InnerException chain (unfortunately, this

the functionality is not built into Serilog, and it had to be implemented independently).

To log an exception, it is enough to pass it as the first parameter to the methods.

journaling:

The log analysis tool allows you to view the message structure in the PropertyGrid:

All unhandled exceptions will be logged automatically. By the way, all such incidents should be considered, and, if there really is a mistake, corrected. A good way to improve the quality of the application.

The described functionality is the best that we could choose for our tasks. Flexibility, ease of administration, ease of use, saving events with objects in a structured way.

I hope this article will help other developers of desktop applications to choose their own version of the implementation of journaling.

We are developing an ERP system optimized for high loads . As a result, clustering is present in the system. And each of the cluster nodes maintains its own log. The log contains error messages, various progress messages from application developers, and so on.

How we implemented journaling - under the cut.

To begin with, according to the precepts of the ancestors they walked around the rake.

We had

- Multiple clusters

- In each of which several nodes

- Crowd of developers

- Hundreds of client desktop applications.

- 20-30mb application and system code with trace and data dump.

All applications through NLog wrote to the files.

Logging to a file had only one advantage — it was very easy to administer, and that dubious one — log files also need to be rotated.

In all other respects it is a failure:

- Structured data

Often, to debug an application, you need to save an object. In addition to him, keep clarifying messages and other information. For example:var parameters = new Dictionary<string, object>(); parameters["id"] = id; parameters["limit"] = maxValues; ... Logger.Verbose("Executing command: {SQL} with parameters: {@Parameters}", sqlCommand, parameters); NLog , . - Availability of information

Logging in files forces log files on all servers to look for bits of information. Multiplied by the number of developers, this leads to the fact that everyone has access to all servers and becomes a permanent headache for administrators.

So, we needed a journaling solution that would make it easy to search for messages from various sources, easy to understand the message, save objects in an easily readable structured format, and lastly, the solution should be easy to install, maintain, be convenient.

After going through several options (Elasticsearch and other similar mechanisms), they stopped at a bunch of SeriLog and MongoDB .

SeriLog perfectly solved the problem of structured event storage. MongoDB is an easy-to-admin base, with enough popularity, a user-friendly interface and support for C #, is actively developing.

In MongoDB, the quality of documenting the driver for C # was somewhat upsetting, so I had to tinker in search of support for ad-hoc requests. However, it is not critical.

As a result, we received such an interface for viewing events, which is available to any developer:

For simple cases, you can use the filter on the first tab. And on the second - a full query window, where you can write arbitrary queries.

A nice feature is that SeriLog saves the message template, which allows (having the program code before your eyes) to find all the relevant messages.

')

Well, SourceContext allows you to filter by message source - from a server, client, or SQL queries.

The latter is the most convenient means of tracking which SQL queries are executed to the database in order to optimize them.

A small digression.

In general, there are 2 tools for tracing SQL queries in our platform.

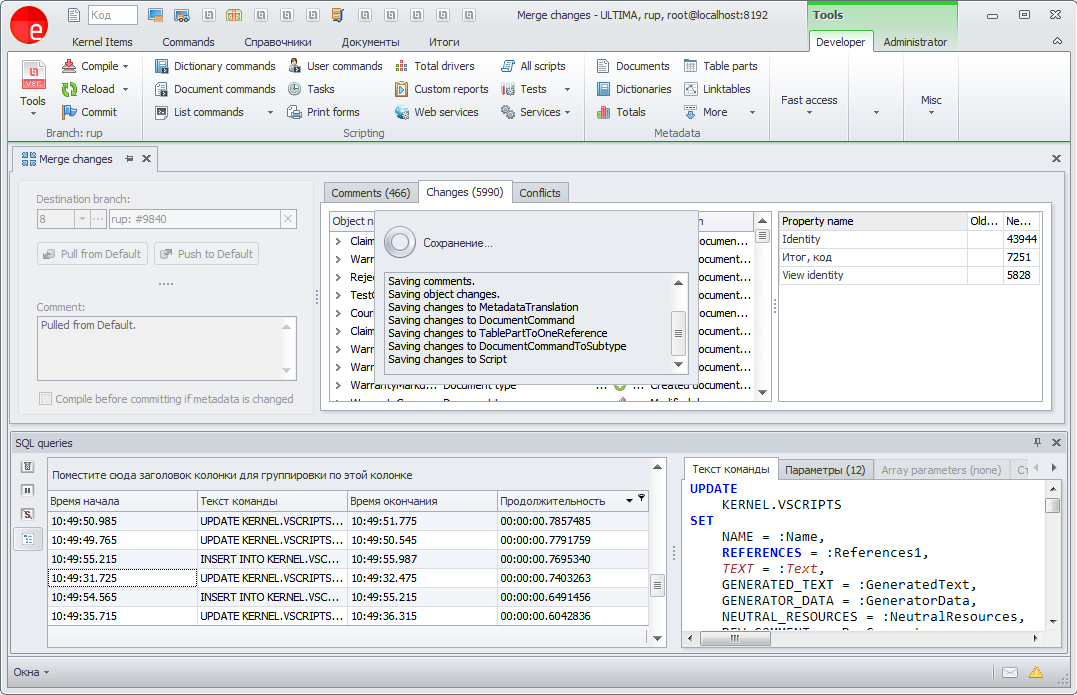

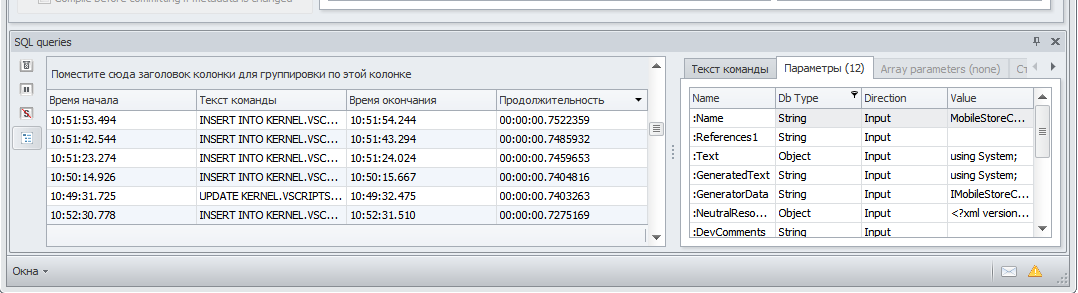

- Tracing Sessions Online

Messages will be received online in the corresponding window. You can connect to any session in the cluster.

Looks like that:

on the second tab, all parameters are listed in the table:

- Centralized log with all SQL queries along with parameters

All messages are available in the centralized log viewer in SourceContext SqlStatement

Let's go back to the above example:

var parameters = new Dictionary<string, object>(); parameters["id"] = id; parameters["limit"] = maxValues; ... Logger.Verbose("Executing command: {SQL} with parameters: {@Parameters}", sqlCommand, parameters); In this case, the entire list of parameters will be attached to the journal event,

and in the log you can find a record about the execution of the command with a specific value

the desired parameter.

The example is somewhat contrived, since all SQL queries are already logged.

Here is a more real one:

Logger.Debug("Brand #{BrandID} contents: {@Brand}", brand.ID, brand); And the type of entries in the log:

Brand #12 contents: Brand { ID: 12, Name: "Pineapple" } When logging the state of a document or other difficult object, it is better not to save its contents in the message:

Logger.Debug("Document #{DocumentID} is saved. Document contents: {@Document}", doc.ID, doc); .... The message will look like this:

Document #101187 is saved. Document contents: SaleDocument { ActualInvoiceDocumentID: null, AgentID: 636, AllowPartialRelease: False, Amount: 5500, AmountDistributionTypeID: null, ArticlesQuantity: 3, ClientDueOnDelivery: 5500, Comments: "noreply", Convertation: null, CreationDate: 02/06/2015 22:21:53, CreatorID: 7, CustomerSupplyContractID: null, DeadDate: 02/11/2015 22:21:53, Deleted: False, DeliveryActive: False, Description: "Sales (Picking) #101187, 2/6/2015" ... 50kb of JSON follows It would be more correct to display only the document number in the message, and pass the contents in the parameters. All parameters can be viewed in JSON:

or in a structured form in a property grid:

When exceptions are logged, their internal structure will be preserved, including

including the HResult, CallStack properties and the whole InnerException chain (unfortunately, this

the functionality is not built into Serilog, and it had to be implemented independently).

To log an exception, it is enough to pass it as the first parameter to the methods.

journaling:

Logger.Error(ex, "Cannot execute user task: {Message}", ex.Message); The log analysis tool allows you to view the message structure in the PropertyGrid:

All unhandled exceptions will be logged automatically. By the way, all such incidents should be considered, and, if there really is a mistake, corrected. A good way to improve the quality of the application.

Finally.

The described functionality is the best that we could choose for our tasks. Flexibility, ease of administration, ease of use, saving events with objects in a structured way.

I hope this article will help other developers of desktop applications to choose their own version of the implementation of journaling.

Source: https://habr.com/ru/post/253367/

All Articles