Looking for perfect monitoring

In this short article I would like to talk about the monitoring tools we use to analyze the operation of our bank's data warehouse (DWH). The article will interest anyone who is not satisfied with the existing ready-made monitoring systems and has thought about assembling one "for themselves" from separate pieces. Much of the article is devoted to Grafana dashboards, which, in my opinion, get undeservedly little attention on Habr. For most components of the monitoring system, the installation process (on RedHat) is briefly reviewed.

Warm lamp dashboards

Formulation of the problem

At first glance, the task is quite simple and trivial: to have a means by which the state of all systems of the repository can be as fully as possible with the smallest number of movements in the shortest time possible, and also be able to notify individual stakeholders about a particular event. However, here it is worthwhile to briefly talk about the features of the systems that need to be looked after:

')

Selection from existing

Of course, the first thing the eye fell on already existing systems: Nagios, Zabbix (to a greater extent), Munin. Each of them is good in its own way, each has its own areas of application. On Habré, there is enough information about each of the systems listed above, so I will list only some of the reasons for which we abandoned the finished system in principle:

Fig. 1 Graph in Zabbix. In all the considered ready-made systems, there are very few possibilities for working with graphics.

Also, if we want to plot a certain function of a metric (for example, a derivative or an exponent), the value of the function will have to be calculated on the side of the samopisny daemon that sends data.

In addition, Zabbix and Nagios are used in the bank for lower-level monitoring (iron status), and there was some experience of using them. In short - with all the fibers of the soul it was felt that this is not what we need.

Graphite

So, in the process of searching for a system capable of beautifully and clearly visualizing such a motley set of metrics, Graphite was discovered. The first impressions of the descriptions were only positive, plus a significant lecture from Yandex , in which the guys solved a rather similar task, made a significant contribution to the decision to deploy the test installation. So we started Graphite.

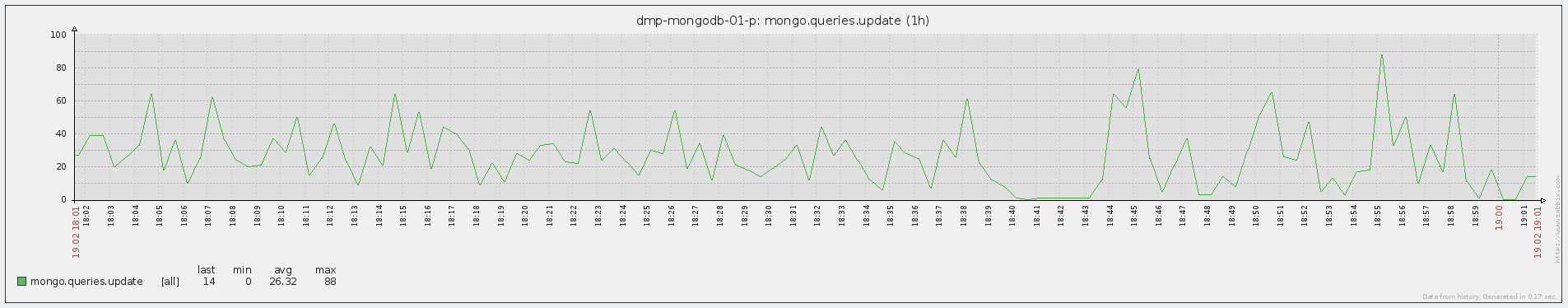

Fig. 2 Graphic Graphic

Graphite is nothing else but a system for drawing graphs online. Simplified - it just takes the metric over the network and puts a dot on the graph, which you can then access in one way or another. Metrics are sent in the “Folder1. Folder2. Name_metrics metric_value metric_timemark”. For example, the simplest sending of the Test metric, which is located in the Folder directory and currently has the value 1, from bash will look something like this:

Simple, isn't it?

Architecturally Graphite consists of three components:

What Graphite liked:

What disappointed:

Grafana

So, for a while we worked with graphite. A test and product installation appeared, metric references were added to the Duet control code, demons were written to monitor the state of Greenplum, an application was written to calculate and send Attunity latency to Graphite. Some kind of visual representation of the work of the systems appeared, for example, a major problem was identified according to the historical work schedules of Duet. Alerts were implemented by third-party code (bash, sas).

But there is no limit to perfection, and after some time we wanted more - so we went to the grafan.

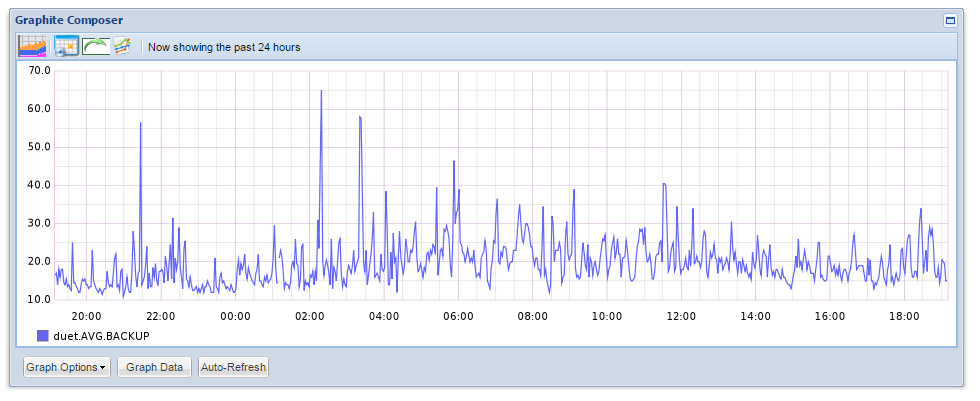

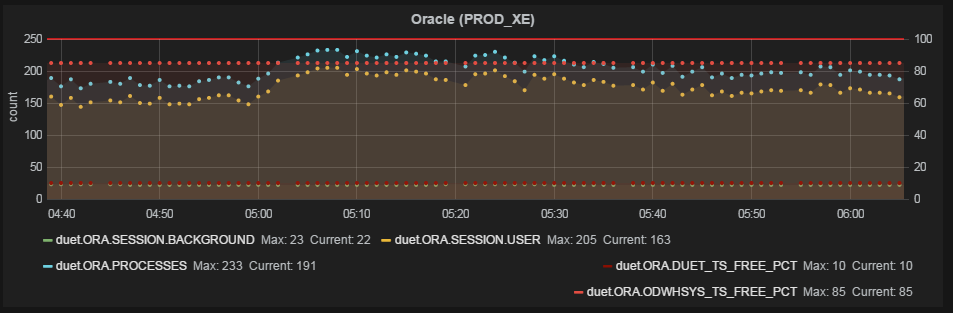

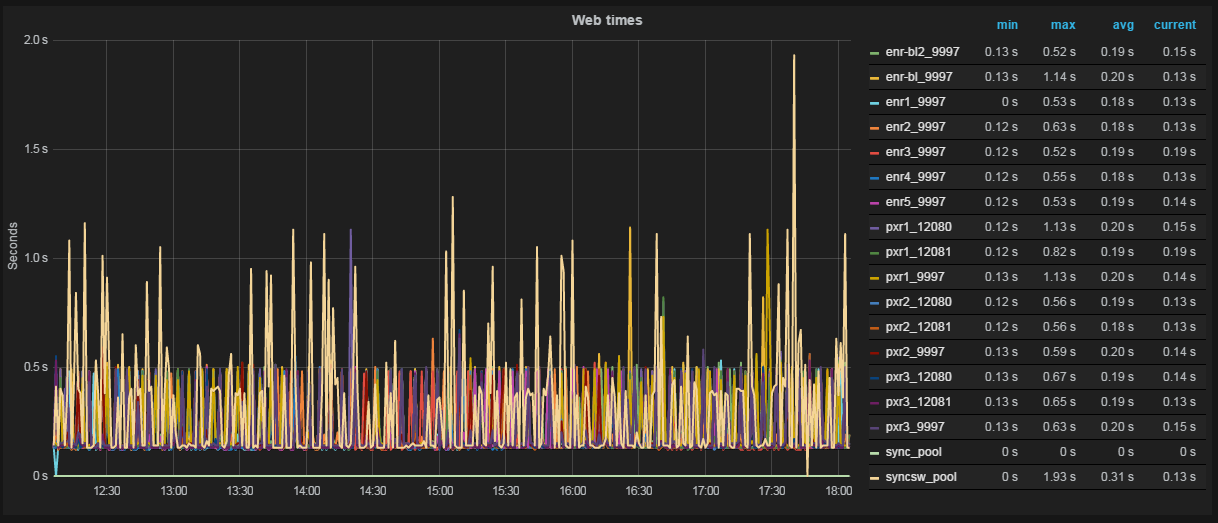

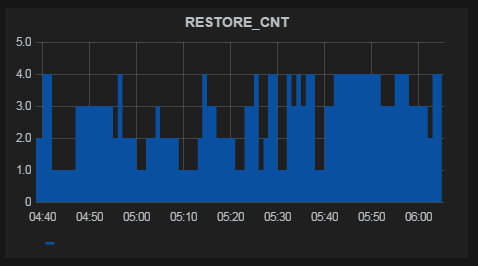

Fig. 3 Graph and table of values in Grafana

Grafana is a graph and dashboard editor based on data from Graphite, InfluxDB or OpenTSDB, which specializes in displaying and analyzing information. It is light, relatively easy to install, and most importantly, it is incredibly beautiful. On board:

Functionally, graphene is a set of user-defined dashboards, divided into lines of user-defined height, in which, in turn, you can create functional elements (graphs, html inserts and dies-triggers). Lines can be moved, renamed and generally mocked at all.

Naturally, the main functional elements of the dashboard are graphics. Creating a new metric graph in Grafan does not require any special knowledge at all - everything is done easily and clearly:

1. Metrics from Graphite are added to the graph - everything happens in the GUI, you can specify all the metrics in a particular folder, etc .;

2. If necessary, functions are applied to the metrics that are applied to the metrics before they are displayed. In addition to simple and frequently used (scale, average, percentiles ...) there are more interesting ones - for example, using the aliasByNode () function, graphan itself can determine exactly how to name the metrics on the graphs. A simple example, there are two metrics:

Servers.ServerA.cpu

Servers.ServerB.cpu

By default, metrics will be called the same - cpu. If you use the above function, ServerA and ServerB will be displayed on the graph;

3. Edit the axes (there is an autoscaling of most popular values — time, file size, speed), the name of the graph;

4. Adjust the display style (fill, line / columns, summation of metrics, table with values, etc.);

The schedule is ready. Optionally, add html-content to the dashboards and simple dice-triggers (attached to the same metric, change the color of the dice or the text on it, if the metric goes beyond the specified limits).

Thus, it is possible to fine-tune the display of any data in almost all details.

Advantages of Grafans:

Disadvantages:

By the way, in the version of grafana 2.0 it is planned to add both authorization and notifications.

Diamond

Well, you say, we have learned how to send metrics from the systems we need and display them on the graph as we need - but if in addition to our super-unique metrics, we also want to see values close to any administrator and Zabbix / Nagios user, such as cpu usage, iops, network usage - for each such metric, you also have to write your demon on bash and stuff it into cron ?! Of course not - there is a Diamond .

Diamond is a daemon written in python that collects and sends system information to graphite and several other systems. Functionally, it consists of collectors — individual daemons-parsers that extract information from the system. In fact, collectors covered the whole range of objects for monitoring - from cpu to mongoDB.

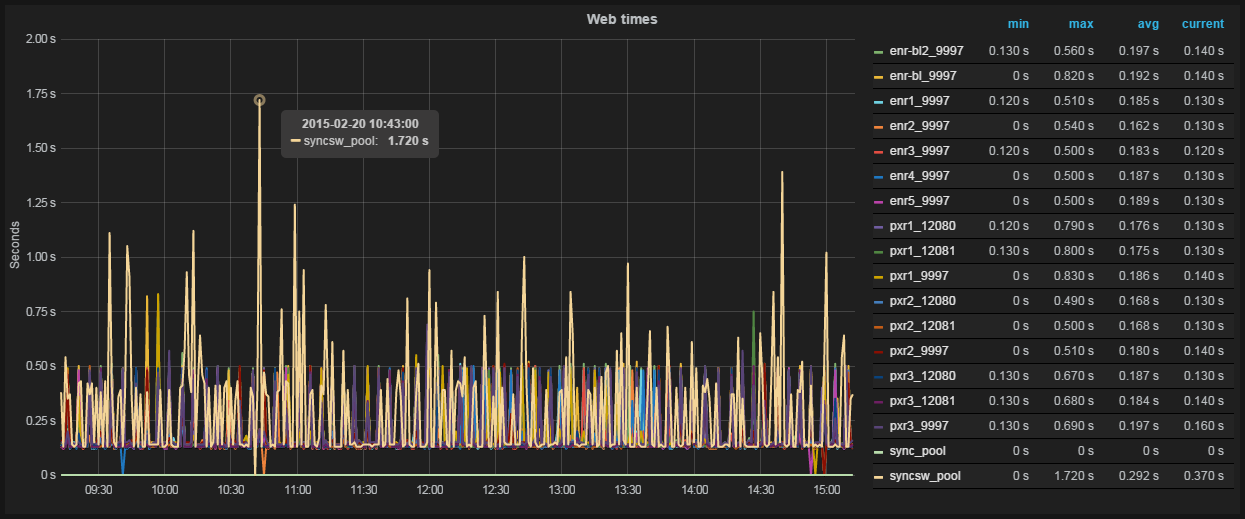

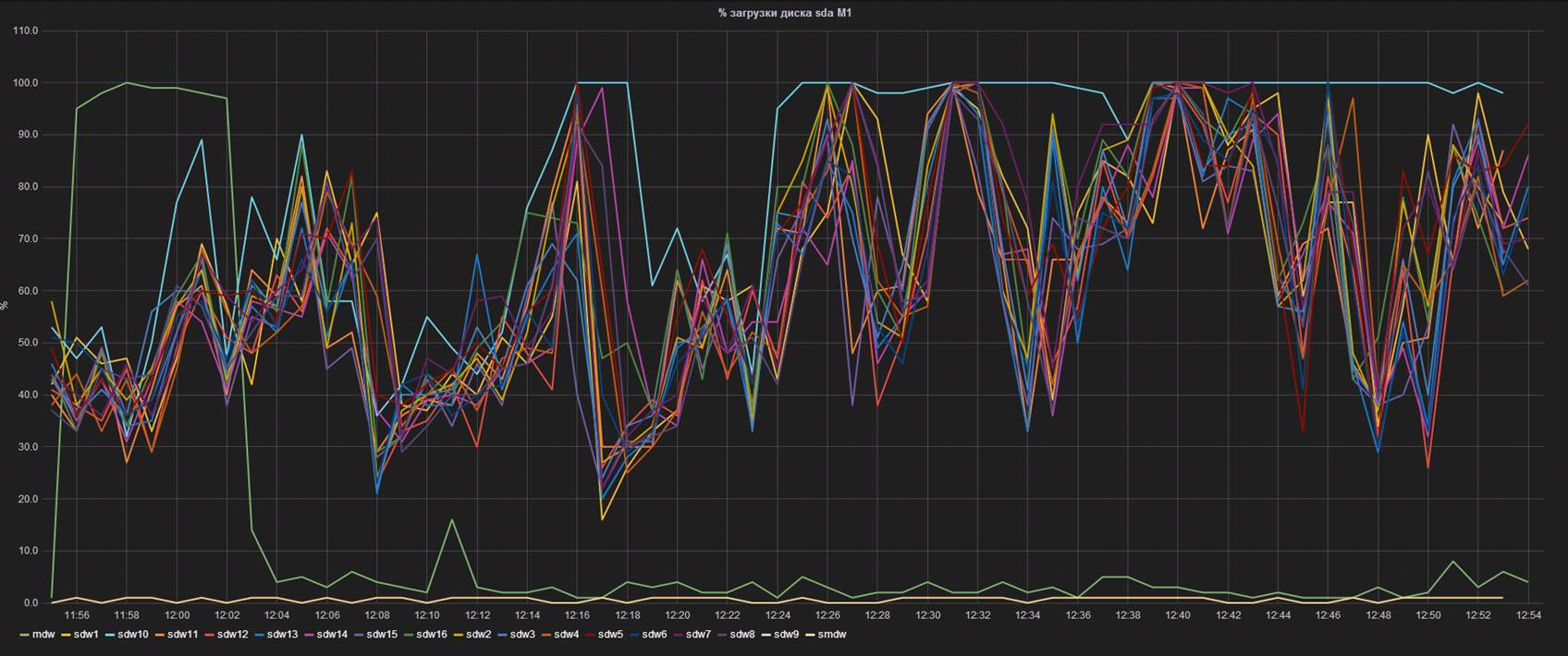

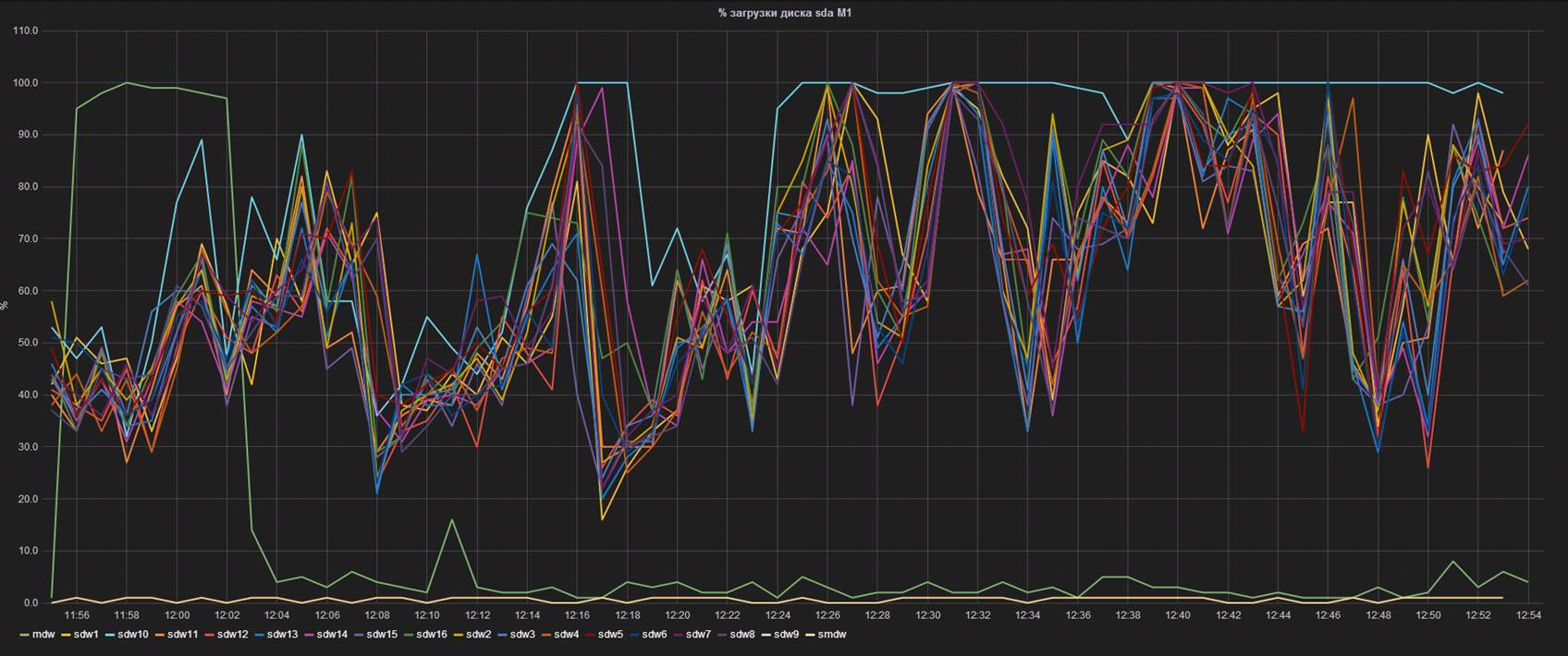

Fig. 4 The performance of one of the server segments is knocked out of the total heap.

Here, each line represents the% of the controller's workload on one of the database segment servers. It can be seen that the segment named sdw10, with equal IOPS (seen on the adjacent graph), is much more loaded. The knowledge of this feature allowed us, firstly, to prepare in advance for the replacement, and secondly, to begin negotiations with the vendor about a possible early replacement of the disks upon the performance decline.

Seyren

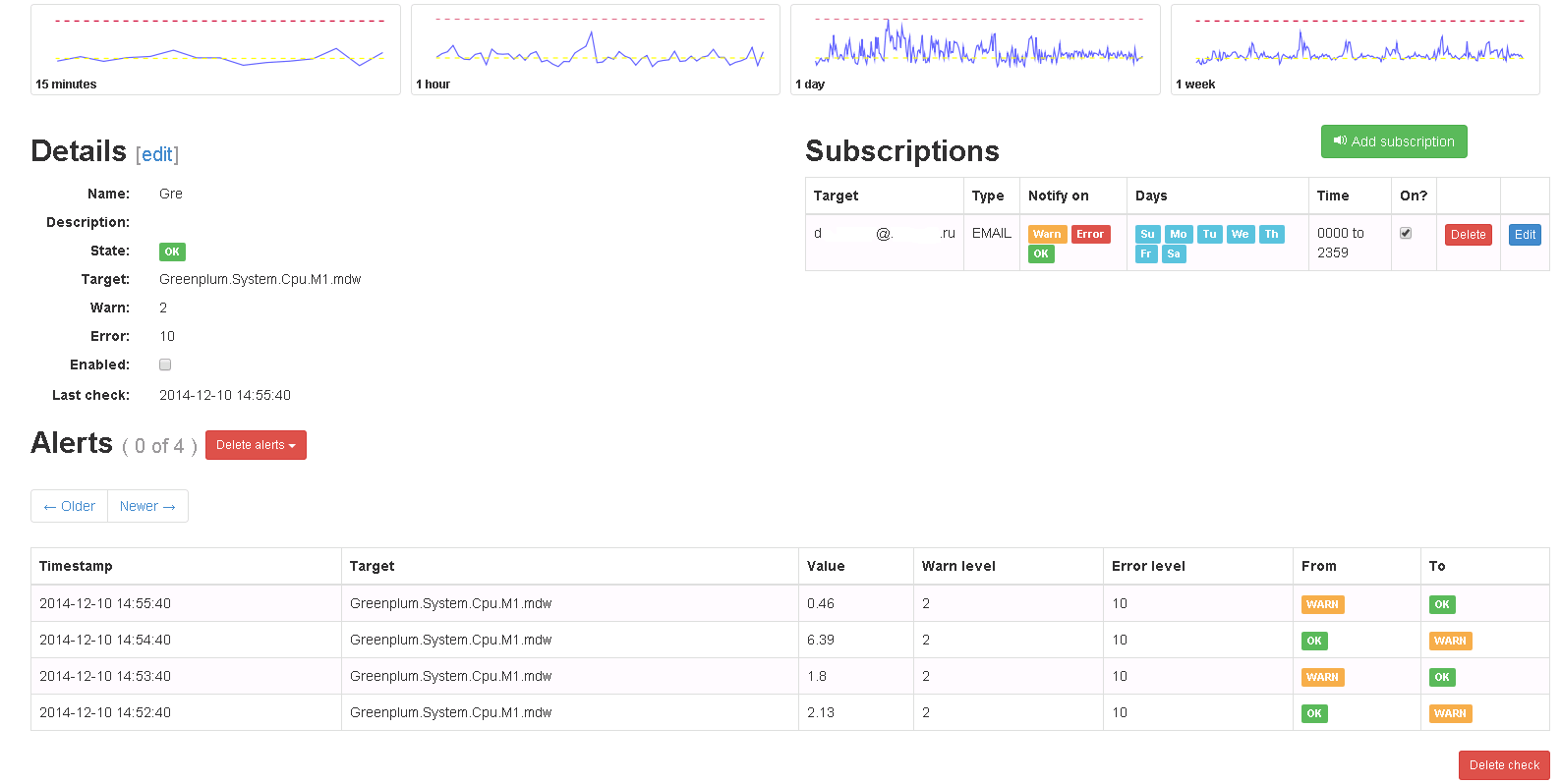

So, the main problem - visualization and data acquisition - has been solved. We have beautiful, flexible dashboards with the display of current and historical information, we are able to send metrics from different systems and easily add new system indicators. The only thing left is to learn how to notify interested persons about a particular event. This is where Seyren comes to help us - a small and very simple dashboard that can notify users in one way or another when one of their metrics goes into a new state. In practice, this means the following: the user creates a check on a specific metric from Graphite and sets two limits in it - WARN and ERROR levels. Then it adds subscribers, specifies the channel with which they need to be notified (Email, Flowdock, HipChat, HTTP, Hubot, IRCcat, PagerDuty, Pushover, SLF4J, Slack, SNMP, Twilio) and the schedule of alerts (for example, notify only on weekdays).

Fig. 5 Check in Seyren

Later, when the metric reaches one of the limits, alerts are sent indicating the criticality. When the metric is returned to its previous state, the same thing happens.

It is necessary to note one not obvious point: in order for Seyren to pick up the metric from Graphite, you must completely, taking into account the register, enter its name when creating the test.

As you can see, the alerts are quite simple and respond only to a linear change in the metric - in our case, this is sufficient. With the help of Seyren, it will not be possible to create an alert that, for example, responds to a change in the average value over the last half hour. For those who need such checks, I recommend to pay attention to Cabot - a complete analogue of Seyren, which has the ability to create checks on the result of applying functions to Graphite metrics. Cabot is distributed as an instance for AWS or DigitalOcean, but it also describes how to perform a bare-metal installation on CentOS.

Conclusion

In total, at the output we have an almost complete monitoring system, which in many ways surpasses the existing “solid” solutions. In addition, the integration of new services in such a system will be much more flexible (although it may be more time consuming), and the extensibility, taking into account the number of software working with Graphite, is almost unlimited.

Links

Graphite

Graphite - how to build a million graphs - record with Yet another conference 2013

Grafana

Diamond

Seyren

Cabot

Cabot installation instructions on CentOS

Our article on disaster recovery for Greenplum

Our article about Attunity CDC

Warm lamp dashboards

Formulation of the problem

At first glance, the task is simple and even trivial: to have a tool that shows the state of all the warehouse's systems as completely as possible, with as few actions and in as little time as possible, and that can also notify individual stakeholders about particular events. However, it is worth briefly describing the systems that need to be monitored:

- MPP RDBMS Greenplum: 4 clusters of 10-18 machines each (depending on the cluster's purpose). Only two machines in a cluster (the master and the standby master) have access to the external network; the rest sit only on the internal, isolated interconnect network;

- Dual-ETL (Duet): more details about this project can be found in a separate article (see Links). From a monitoring point of view, the project is interesting because the required metrics are generated in many different environments: bash scripts, SAS code, Oracle databases and Greenplum;

- Attunity CDC: details can also be found in a separate article (see Links). The key indicator for this system is latency, that is, the time between a record appearing or changing in the source database (Oracle) and the same event in the target (Greenplum);

- ETL processes and the hardware they run on: the loading and transformation of data need to be covered as fully as possible;

- 18 web services for various purposes: the simplest task. The customer wanted to know each service's response time and availability;

- Several other simple metrics.


Selection from existing

Naturally, the first things we looked at were existing systems: Nagios, Zabbix (mostly) and Munin. Each is good in its own way, and each has its own area of application. There is plenty of information on Habr about each of them, so I will only list some of the reasons why we decided against a ready-made system altogether:

- The metrics we want to monitor are quite specialized and have little in common with the typical use cases of off-the-shelf systems (hardware load, network bandwidth, etc.). In any case we would have had to write our own daemons to send data to the central system, which defeats the whole idea of a ready-made monitoring system;

- The main purpose of such systems is to notify about problems. For us the main thing is to visualize how the components work; alerting is only the second task;

- May the fans of the above systems forgive me, but they are ... ugly. You do not feel like opening them on two monitors, choosing different display styles, calculating averages and derivatives, printing and discussing, zooming and dragging, overlaying graphs on each other and looking for hidden meaning.

Fig. 1 A graph in Zabbix. All the ready-made systems we considered offer very few options for working with graphs.

Also, if we want to plot some function of a metric (for example, a derivative or an exponent), the value of the function has to be calculated by the home-grown daemon that sends the data.

In addition, Zabbix and Nagios are already used in the bank for lower-level monitoring (hardware status), so we had some experience with them. In short, with every fiber of our souls we felt that this was not what we needed.

Graphite

So, while searching for a system that could visualize such a motley set of metrics beautifully and clearly, we discovered Graphite. The first impressions from the descriptions were entirely positive, and a notable talk from Yandex, in which the team solved a rather similar problem, contributed significantly to the decision to deploy a test installation. So we set up Graphite.

Fig. 2 A graph in Graphite

Graphite is nothing more than a system for drawing graphs in real time. Put simply, it receives a metric over the network and puts a point on a graph, which you can then access in one way or another. Metrics are sent in the format "Folder1.Folder2.metric_name metric_value metric_timestamp". For example, the simplest way to send the Test metric, which lives in the Folder directory and currently has the value 1, from bash looks something like this:

    echo "Folder.Test 1 $(date +%s)" | nc graphite_host 2003

Simple, isn't it?
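The one-liner above is easy to wrap for reuse. This is a minimal sketch, assuming a Carbon listener on plaintext port 2003 and `nc` on the sending host. The names `graphite_line`, `graphite_send`, `GRAPHITE_HOST` and `GRAPHITE_PORT` are made up for this example and are not part of Graphite.

```shell
#!/bin/sh
# Build one line of Carbon's plaintext protocol: "<path> <value> <timestamp>".
# If no timestamp is given, the current time (seconds since the epoch) is used.
graphite_line() {
    printf '%s %s %s\n' "$1" "$2" "${3:-$(date +%s)}"
}

# Send one metric to Carbon. Host and port are assumptions (placeholders);
# override them through GRAPHITE_HOST and GRAPHITE_PORT.
graphite_send() {
    graphite_line "$1" "$2" "$3" |
        nc "${GRAPHITE_HOST:-graphite_host}" "${GRAPHITE_PORT:-2003}"
}
```

A call such as `graphite_send Folder.Test 1` then sends the same metric as before. Depending on the `nc` variant, a timeout option such as `-w 1` may be needed so that the connection closes after sending.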

Architecturally Graphite consists of three components:

- Graphite-Web, a Django web front end that also renders the graphs; it also uses MySQL;

- Carbon, the daemon that receives incoming metrics, caches them and writes them to Whisper;

- Whisper, a file-based database for storing historical metric data. Each metric is stored in its own file, which makes metrics quite convenient to manage (move between installations, delete, etc.).
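Because there is one file per metric, a metric name maps directly to a path on disk. The sketch below illustrates that mapping. The storage root `/var/lib/carbon/whisper` is an assumption and depends on how Carbon is configured, and `whisper_path` is a hypothetical helper name.

```shell
#!/bin/sh
# Root of the Whisper storage. This default is an assumption; use the
# storage directory configured for your Carbon installation.
WHISPER_DIR="${WHISPER_DIR:-/var/lib/carbon/whisper}"

# Map a metric name to the Whisper file that stores it:
# dots in the name become directory separators, and ".wsp" is appended.
whisper_path() {
    printf '%s/%s.wsp\n' "$WHISPER_DIR" "$(printf '%s' "$1" | tr '.' '/')"
}
```

With this mapping, moving or deleting the metric Folder.Test comes down to moving or deleting the file that `whisper_path Folder.Test` prints.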

What we liked about Graphite:

- all graphs are in one place, sorted into folders as the user sees fit rather than by server or server-group name (hello, Zabbix);

- the timestamp of each metric can be set arbitrarily, that is, points can be placed on the graph both in the past and in the future, which is important for some components of the warehouse;

- functions can be applied to the data on the graphs;

- several nice graphical extras: fill, bar display and so on;

- slightly more convenient zooming.
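The arbitrary timestamp is simply the third field of the plaintext line, so a point can be written into the past by subtracting an offset from the current time. This is a sketch only, and `graphite_line_ago` is a hypothetical helper name.

```shell
#!/bin/sh
# Print a plaintext-protocol line whose timestamp lies <age_seconds> in the past.
# Usage: graphite_line_ago <path> <value> <age_seconds> [now]
# The optional fourth argument overrides "now" (handy for testing).
graphite_line_ago() {
    now="${4:-$(date +%s)}"
    printf '%s %s %s\n' "$1" "$2" "$((now - $3))"
}
```

For example, `graphite_line_ago Folder.Test 5 3600` produces a line for a point one hour old, which can be piped to `nc` like any other. Keep in mind that a point is stored only if its timestamp falls within the retention period configured for that metric.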

What disappointed us:

- rather meager options for building dashboards;

- interactivity is lacking in many details;

- no alerting functionality at all;

- no way to view a table of metric values.

Graphite installation

    # Graphite-Web keeps its own data in MySQL, so install MySQL together with Graphite
    yum --nogpgcheck install graphite-web graphite-web-selinux mysql mysql-server MySQL-python python-carbon python-whisper
    service mysqld start
    /usr/bin/mysql_secure_installation

    # Point Graphite-Web at MySQL in /etc/graphite-web/local_settings.py:
    DATABASES = {
        'default': {
            'NAME': 'graphite',
            'ENGINE': 'django.db.backends.mysql',
            'USER': 'graphite',
            'PASSWORD': 'your_pass',
            'HOST': 'localhost',
            'PORT': '3306',
        }
    }

    # Create the MySQL user and database (same password as in local_settings.py)
    mysql -e "CREATE USER 'graphite'@'localhost' IDENTIFIED BY 'your_pass';" -u root -p
    mysql -e "GRANT ALL PRIVILEGES ON graphite.* TO 'graphite'@'localhost';" -u root -p
    mysql -e "CREATE DATABASE graphite;" -u root -p
    mysql -e 'FLUSH PRIVILEGES;' -u root -p

    # Create the Graphite-Web tables
    /usr/lib/python2.6/site-packages/graphite/manage.py syncdb

    service carbon-cache start
    service httpd start

    # Graphite-Web should now answer on port 80.
    # Metric retention is configured in /etc/carbon/storage-schemas.conf

Grafana

So, for a while we worked with Graphite. Test and production installations appeared, metric-sending calls were added to the Duet control code, daemons were written to monitor the state of Greenplum, and an application was written to calculate Attunity latency and send it to Graphite. We got a visual picture of how the systems work; for example, one major problem was identified from the historical graphs of Duet's operation. Alerts were implemented in separate code (bash, SAS).

But there is no limit to perfection, and after a while we wanted more. So we moved on to Grafana.

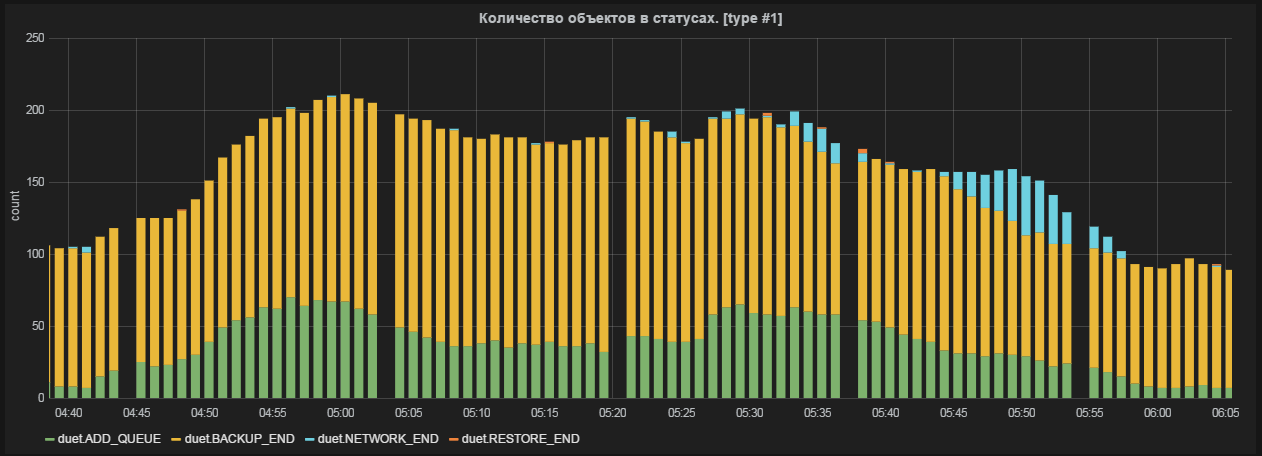

Fig. 3 Graph and table of values in Grafana

Grafana is a graph and dashboard editor that works on data from Graphite, InfluxDB or OpenTSDB and specializes in displaying and analyzing information. It is lightweight, relatively easy to install and, most importantly, incredibly beautiful. On board:

- full-featured dashboards with graphs, triggers, HTML inserts and other goodies;

- scrolling, zooming and other -ing;

- sortable value tables (min, max, avg, current, total);

- support for all Graphite functions.

Functionally, Grafana is a set of user-defined dashboards divided into rows of user-defined height, in which you can in turn create functional elements (graphs, HTML inserts and trigger tiles). Rows can be moved, renamed and generally abused in every way.

Naturally, the main functional elements of a dashboard are graphs. Creating a new metric graph in Grafana requires no special knowledge at all; everything is done easily and clearly:

1. Metrics from Graphite are added to the graph. Everything happens in the GUI; you can select all the metrics in a particular folder, and so on;

2. If necessary, functions are applied to the metrics before they are displayed. Besides the simple and frequently used ones (scale, average, percentiles ...) there are more interesting ones. For example, with the aliasByNode() function Grafana itself works out how to name the metrics on the graph. A simple example with two metrics:

Servers.ServerA.cpu

Servers.ServerB.cpu

By default, both metrics would be named the same: cpu. With the above function, ServerA and ServerB are displayed on the graph;

3. Edit the axes (the most common units, such as time, file size and speed, are scaled automatically) and the name of the graph;

4. Adjust the display style (fill, lines / bars, summation of metrics, table of values, etc.);


The graph is ready. Optionally, add HTML content to the dashboard and simple trigger tiles (a tile is bound to a single metric and changes its color or text when the metric goes beyond the specified limits).

In this way, the display of any data can be fine-tuned in almost every detail.
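To make the naming rule from step 2 concrete, here is a small local illustration of what aliasByNode does to a series name: it keeps only the dot-separated node at the given position, counted from 0. This only mimics the naming behaviour. It is not Graphite's or Grafana's code, and `alias_by_node` is a hypothetical helper name.

```shell
#!/bin/sh
# Keep only the n-th dot-separated node of a series name (nodes count from 0).
# Usage: alias_by_node <series_name> <node_index>
alias_by_node() {
    # cut numbers fields from 1, so shift the index by one.
    printf '%s\n' "$1" | cut -d. -f"$(($2 + 1))"
}
```

In a graph, the corresponding target expression would be `aliasByNode(Servers.*.cpu, 1)`, which labels the two series ServerA and ServerB.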

Advantages of Grafana:

- flexibility: everything is configurable;

- usability: it is as comfortable as it is beautiful;

- mathematical / statistical functions can be applied to a metric before it is displayed;

- graphs are rendered on the client side (although in some situations this can be counted as a drawback);

Disadvantages:

- no authorization: anyone can change your graphs and dashboards;

- no alerts;

By the way, both authorization and notifications are planned for Grafana 2.0.

Install Grafana

    # Required: elasticsearch-1.1.1 (http://www.elasticsearch.org/downloads/1-1-1/)
    # and grafana-1.9.1.tar (http://grafana.org/download/)

    # Grafana 1.x stores dashboards in Elasticsearch; start it from its bin directory
    nohup ./elasticsearch &

    mkdir /opt/grafana
    cp -r grafana-1.9.1/* /opt/grafana
    cd /opt/grafana
    cp config.sample.js config.js
    vi config.js
    # In config.js, describe the Graphite data source:
    graphite: {
      type: 'graphite',
      url: "http://"+window.location.hostname+":80",
      default: true
    },

    # Allow cross-origin requests to Graphite-Web; add to /etc/httpd/conf.d/graphite-web.conf:
    Header set Access-Control-Allow-Origin "*"
    Header set Access-Control-Allow-Methods "GET, OPTIONS"
    Header set Access-Control-Allow-Headers "origin, authorization, accept"

    # Serve Grafana on port 8080; create /etc/httpd/conf.d/grafana.conf:
    Listen 8080
    <VirtualHost *:8080>
        DocumentRoot /opt/grafana/
    </VirtualHost>

    service httpd restart

Diamond

Fine, you might say, we have learned how to send metrics from the systems we need and display them on graphs the way we want. But what if, in addition to our super-unique metrics, we also want to see values dear to any administrator and Zabbix / Nagios user, such as CPU usage, IOPS and network usage? Do we have to write a bash daemon for each such metric and stuff it into cron?! Of course not, because there is Diamond.

Diamond is a daemon written in Python that collects system information and sends it to Graphite and several other systems. Functionally it consists of collectors: separate parser modules that extract information from the system. The collectors cover practically the whole range of monitored objects, from CPU to MongoDB.
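Home-grown bash metrics do not have to live in cron either. To the best of my knowledge, Diamond's UserScriptsCollector runs executable scripts and reads "metric value" lines from their output. The sketch below shows a function that produces such a line. The metric name `etl.inbox.files` and the idea of an ETL inbox directory are hypothetical and chosen only for illustration.

```shell
#!/bin/sh
# Print the number of regular files under a directory as a
# "<metric> <value>" line. Metric name and directory are hypothetical.
# Usage: inbox_metric <directory>
inbox_metric() {
    # tr strips the padding that some wc implementations add.
    count=$(find "$1" -type f | wc -l | tr -d ' ')
    printf 'etl.inbox.files %s\n' "$count"
}
```

A script for the collector would end with a call such as `inbox_metric /path/to/inbox`, with the path replaced by a real directory.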

Full list of collectors:

- AmavisCollector
- ApcupsdCollector
- BeanstalkdCollector
- BindCollector
- CPUCollector
- CassandraJolokiaCollector
- CelerymonCollector
- CephCollector
- CephStatsCollector
- ChronydCollector
- ConnTrackCollector
- CpuAcctCgroupCollector
- DRBDCollector
- DarnerCollector
- DiskSpaceCollector
- DiskUsageCollector
- DropwizardCollector
- DseOpsCenterCollector
- ElasticSearchCollector
- ElbCollector
- EndecaDgraphCollector
- EntropyStatCollector
- ExampleCollector
- Eximcollector
- FilesCollector
- FilestatCollector
- FlumeCollector
- GridEngineCollector
- HAProxyCollector
- HBaseCollector
- HTTPJSONCollector
- HadoopCollector
- HttpCollector
- Httpdcollector
- IODriveSNMPCollector
- IPCollector
- IPMISensorCollector
- IPVSCollector
- IcingaStatsCollector
- InterruptCollector
- JCollectdCollector
- JbossApiCollector
- JolokiaCollector
- KSMCollector
- KVMCollector
- KafkaCollector
- LMSensorsCollector
- LibvirtKVMCollector
- LoadAverageCollector
- MemcachedCollector
- MemoryCgroupCollector
- MemoryCollector
- MemoryDockerCollector
- MemoryLxcCollector
- MongoDBCollector
- Monitcollector
- MountStatsCollector
- MySQLCollector
- MySQLPerfCollector
- NagiosPerfdataCollector
- NagiosStatsCollector
- NetAppCollector
- NetscalerSNMPCollector
- NetworkCollector
- NfsCollector
- NfsdCollector
- NginxCollector
- NtpdCollector
- NumaCollector
- OneWireCollector
- OpenLDAPCollector
- OpenVPNCollector
- OpenstackSwiftCollector
- OpenstackSwiftReconCollector
- OssecCollector
- PassengerCollector
- PgbouncerCollector
- PhpFpmCollector
- PingCollector
- PostfixCollector
- PostgresqlCollector
- PostqueueCollector
- PowerDNSCollector
- ProcessResourcesCollector
- ProcessStatCollector
- PuppetAgentCollector
- PuppetDBCollector
- PuppetDashboardCollector
- RabbitMQCollector
- RedisCollector
- ResqueWebCollector
- S3BucketCollector
- SNMPCollector
- SNMPInterfaceCollector
- SNMPRawCollector
- ServerTechPDUCollector
- SidekiqWebCollector
- SlabInfoCollector
- SmartCollector
- Sockstatcollector
- SoftInterruptCollector
- SolrCollector
- SqsCollector
- Squidcollector
- SupervisordCollector
- TCPCollector
- TokuMXCollector
- UDPCollector
- UPSCollector
- UnboundCollector
- UptimeCollector
- UserScriptsCollector
- UsersCollector
- VMSDomsCollector
- VMSFSCollector
- VMStatCollector
- VarnishCollector
- WebsiteMonitorCollector
- XENCollector
- ZookeeperCollector

Each collector, in turn, processes and sends several metrics to the receiving system (in our case, Graphite). For example, the network collector sends as many as 18 metrics for each network interface. Thus, it seems to me, our monitoring system covers the main functionality of Zabbix and Nagios: adding new system metrics in one or two clicks. In particular, our short but vivid experience of administering Greenplum suggested that a very important parameter of the database's operation is the load on the disk subsystem of the segment servers. We created a separate dashboard with graphs of IOPS, % load, await and service time for the RAID controllers of the segment servers (based on /proc/diskstats). A short period of observation revealed an interesting pattern: before a disk in the controller gave up the ghost and the RAID controller's status utility reported that the disk needed replacing, the performance of the entire array sagged noticeably:

Fig. 4 The performance of one of the segment servers stands out from the crowd.

Here each line shows the % load of the controller on one of the database's segment servers. You can see that the segment named sdw10, at the same IOPS (visible on the adjacent graph), is loaded much more heavily. Knowing this pattern allowed us, first, to prepare for the replacement in advance and, second, to start negotiations with the vendor about replacing disks early, as soon as performance declines.
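A "% load" figure of this kind can be derived from /proc/diskstats. The sketch below shows the arithmetic under the assumption that two samples of the "time spent doing I/O" counter are taken a known interval apart. It is not taken from Diamond's source, and the helper names `io_ticks` and `disk_util_pct` are hypothetical.

```shell
#!/bin/sh
# Read the cumulative "time spent doing I/O" counter (milliseconds) for a
# block device. In /proc/diskstats it is the 13th whitespace-separated field.
# Usage: io_ticks <device> [stats_file]   (stats_file defaults to /proc/diskstats)
io_ticks() {
    awk -v dev="$1" '$3 == dev { print $13 }' "${2:-/proc/diskstats}"
}

# Utilization in percent between two samples taken <interval_ms> apart.
# Usage: disk_util_pct <ticks_before> <ticks_after> <interval_ms>
disk_util_pct() {
    awk -v a="$1" -v b="$2" -v t="$3" \
        'BEGIN { printf "%.1f\n", (b - a) * 100 / t }'
}
```

For instance, if the counter grows by 500 ms over a 1000 ms interval, the device was busy 50% of the time. Comparing this value across segment servers at equal IOPS is what exposes an outlier like sdw10.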

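Diamond installation

    yum install build-essentials python-dev python python-devel psutil python-configobj

    # Get the sources from https://github.com/python-diamond/Diamond and, from the source directory, run:
    make install

    # Create the configuration file from the example
    cp /etc/diamond/diamond.conf.example /etc/diamond/diamond.conf

    # Edit it: set the Graphite host and the other handler settings
    vi /etc/diamond/diamond.conf

    # Collectors live in /usr/share/diamond/collectors/; enable and configure the ones you need
    diamond-setup

    # Start the daemon
    /etc/init.d/diamond start

Seyren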

So, the main problem, collecting and visualizing data, has been solved. We have beautiful, flexible dashboards showing current and historical information, we can send metrics from different systems and we can easily add new system indicators. The only thing left is to learn how to notify the interested people about a particular event. This is where Seyren comes to the rescue: a small and very simple dashboard that can notify users, in one way or another, when one of their metrics changes state. In practice this means the following: the user creates a check on a specific Graphite metric and sets two limits in it, the WARN and ERROR levels. Then they add subscribers, specify the channel through which each should be notified (Email, Flowdock, HipChat, HTTP, Hubot, IRCcat, PagerDuty, Pushover, SLF4J, Slack, SNMP, Twilio) and the alert schedule (for example, notify only on weekdays).

Fig. 5 A check in Seyren

Later, when the metric reaches one of the limits, alerts are sent indicating the severity. The same happens when the metric returns to its previous state.
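The logic of such a check is easy to picture. The sketch below is not Seyren's code, only an illustration of classifying a value against the two limits, under the assumption that higher values are worse (WARN limit not above the ERROR limit). The name `check_state` is hypothetical.

```shell
#!/bin/sh
# Classify a metric value against WARN and ERROR limits.
# Assumes higher values are worse, i.e. warn <= error.
# Usage: check_state <value> <warn> <error>
check_state() {
    awk -v v="$1" -v w="$2" -v e="$3" 'BEGIN {
        # Adding 0 forces a numeric comparison.
        if (v + 0 >= e + 0)      print "ERROR"
        else if (v + 0 >= w + 0) print "WARN"
        else                     print "OK"
    }'
}
```

An alert is due whenever the result differs from the previous one, which covers both crossing a limit and returning to the earlier state.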

One non-obvious point is worth noting: for Seyren to pick up a metric from Graphite, you must enter its full name, with the exact letter case, when creating the check.

Install Seyren

    # Seyren needs MongoDB, so install and start it first
    sudo yum install mongodb-org
    sudo service mongod start

    # Download the release:
    # https://github.com/scobal/seyren/releases/download/1.1.0/seyren-1.1.0.jar

    mkdir /var/log/seyren                          # directory for logs
    export SEYREN_LOG_PATH=/var/log/seyren/        # where Seyren writes its logs
    export GRAPHITE_URL="http://graphite_host:80"  # address of Graphite
    export SMTP_FROM=seyren@domain.com             # sender address for e-mail alerts
    nohup java -jar seyren-1.1.0.jar &

As you can see, the alerts are quite simple and react only to the value of the metric itself; in our case this is sufficient. With Seyren you cannot create an alert that, for example, reacts to a change in the average value over the last half hour. For those who need such checks, I recommend taking a look at Cabot, a close analogue of Seyren that can create checks on the result of applying functions to Graphite metrics. Cabot is distributed as an instance for AWS or DigitalOcean, but there are also instructions for a bare-metal installation on CentOS.
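If only an occasional "average over the last half hour" check is needed, it can also be computed in a home-grown script from the render API's raw output, which has the form `<target>,<start>,<end>,<step>|<v1>,<v2>,...`. This is a sketch under the assumption that the response contains a single series. The name `raw_average` is hypothetical.

```shell
#!/bin/sh
# Average the values in one line of Graphite's "raw" render format.
# Missing points appear as "None" and are skipped.
# Usage: raw_average '<target>,<start>,<end>,<step>|<v1>,<v2>,...'
raw_average() {
    printf '%s\n' "$1" | awk -F'|' '{
        n = split($2, v, ",")
        sum = 0; cnt = 0
        for (i = 1; i <= n; i++)
            if (v[i] != "None" && v[i] != "") { sum += v[i]; cnt++ }
        if (cnt > 0) printf "%.2f\n", sum / cnt
        else print "None"
    }'
}
```

The input line could come from a request such as `curl -s 'http://graphite_host/render?target=Folder.Test&from=-30min&format=raw'`, where the host name is a placeholder.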

Conclusion

All in all, we ended up with an almost complete monitoring system that in many ways surpasses the existing "heavyweight" solutions. In addition, integrating new services into such a system is much more flexible (although it may take more effort), and its extensibility, given the amount of software that works with Graphite, is almost unlimited.

Links

Graphite

Graphite - how to build a million graphs - recording from Yet another Conference 2013

Grafana

Diamond

Seyren

Cabot

Cabot installation instructions on CentOS

Our article on disaster recovery for Greenplum

Our article about Attunity CDC

Source: https://habr.com/ru/post/252907/
