Load balancing with LVS

So, you have a heavily loaded server and you want to take some of the load off it. You set up a second, identical server, but users stubbornly keep going to the first one. This is the point where you need to think about load balancing.

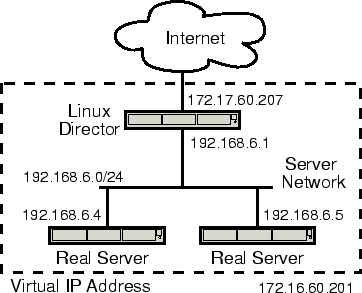

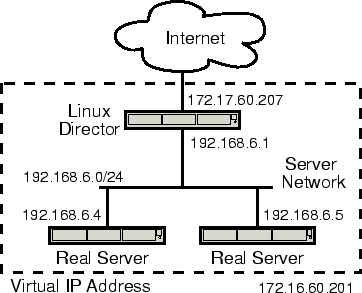

DNS-RR

The first thing that comes to mind is round-robin DNS. In case anyone is unfamiliar with it: this method spreads requests across N servers simply by returning a different IP address in response to each DNS query.
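To make the mechanism concrete, here is a small simulation (not a DNS server). It assumes a hypothetical pool of three A records; each query gets the same records rotated by one position, and a typical client just uses the first one. Real resolvers may reorder or cache the answers.

```bash
#!/usr/bin/env bash
# Simulation of round-robin DNS answers (not a real DNS server).
# The pool of A records below is hypothetical.
POOL=(192.168.100.2 192.168.100.3 192.168.100.4)

# rr_answer QUERY_NO: print the A records in the order a round-robin
# server would return them for the QUERY_NO-th query (0-based).
rr_answer() {
  local q=$1 n=${#POOL[@]} i
  local -a out=()
  for ((i = 0; i < n; i++)); do
    out+=("${POOL[(q + i) % n]}")
  done
  echo "${out[*]}"
}

# Most clients take the first record, so consecutive queries land on
# consecutive servers, regardless of how busy each server actually is.
for q in 0 1 2 3; do
  first=$(rr_answer "$q" | cut -d' ' -f1)
  echo "query $q -> $first"
done
```

Note that nothing here knows whether a server is alive or overloaded, which is exactly the weakness listed next.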

The drawbacks:

- It is hard to manage: you list a group of IP addresses and that's it. There is no weight control, server health is not monitored, and so on.

- You spread requests across a set of IP addresses, but you do not actually balance the load on the servers.

- Client-side DNS caching can ruin the whole scheme.

Although it is not a good idea to put "too much machinery" between the client and the server, I want some way to keep the situation under control.

LVS

This is where Linux Virtual Server (LVS) comes to the rescue. It is essentially a kernel module (ipvs) that has existed since roughly kernel versions 2.0-2.2.

What is it? Essentially an L4 router (I would call it L3, but the authors insist on L4) that transparently and controllably forwards packets along the routes you specify.

The basic terminology is as follows:

- Director: the routing node itself.

- Realserver: a workhorse, i.e. a node of our server farm.

- VIP (Virtual IP): the IP address of our virtual server, assembled from a bunch of real ones.

- DIP and RIP: the IP addresses of the director and of the real servers, respectively.

On the director, the IPVS (IP Virtual Server) module is loaded, the packet forwarding rules are configured, and the VIP is brought up, usually as an alias on the external interface. Users connect to the VIP.

Packets arriving at the VIP are forwarded by the chosen method to one of the real servers and processed there as usual. To the client it looks as if it is talking to a single machine.

Rules

The forwarding rules are very simple. We define a virtual service identified by a VIP:port pair; the service can be TCP or UDP. This is also where we set the node rotation method (the scheduler). Next, we define the set of servers from our farm, each as a RIP:port pair, and specify the packet forwarding method and, if the selected scheduler needs it, the weight.

It looks something like this.

```
# ipvsadm -A -t 192.168.100.100:80 -s wlc
# ipvsadm -a -t 192.168.100.100:80 -r 192.168.100.2:80 -w 3
# ipvsadm -a -t 192.168.100.100:80 -r 192.168.100.3:80 -w 2
# ipvsadm -a -t 192.168.100.100:80 -r 127.0.0.1:80 -w 1
```

Also, do not forget to install the ipvsadm package; it should be in your distribution's repository. It is certainly available in Debian and Red Hat.

In the example above, we create a virtual HTTP service on 192.168.100.100 and add the servers 127.0.0.1, 192.168.100.2 and 192.168.100.3 to it. The "-w" switch sets the server weight: the higher it is, the more requests the server receives. A server with weight 0 gets no new connections at all, which is very handy when you need to take it out of service.

By default, packets are forwarded using the DR method. Overall, the following forwarding methods are available:
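How weights turn into shares of traffic is easiest to see with the weighted round-robin scheduler (`-s wrr`). Below is a minimal sketch of the classic interleaved WRR rotation, which is, as far as I know, the idea behind the IPVS `wrr` scheduler; the function names and the `name,weight` input format are made up for illustration. The example above uses `wlc`, which also looks at current connections, so its shares will not follow this exact pattern.

```bash
#!/usr/bin/env bash
# Sketch of interleaved weighted round-robin, the idea behind "-s wrr".
# Input format "name,weight" is hypothetical.

gcd() {
  local a=$1 b=$2 t
  while (( b != 0 )); do t=$(( a % b )); a=$b; b=$t; done
  echo "$a"
}

# wrr_sequence COUNT name,weight...: print the first COUNT picks.
# A server with weight 0 is never picked, i.e. it is out of rotation.
wrr_sequence() {
  local count=$1; shift
  local -a names=() weights=() out=()
  local spec name w max=0 g=0
  for spec in "$@"; do
    IFS=, read -r name w <<< "$spec"
    names+=("$name"); weights+=("$w")
    if (( w > max )); then max=$w; fi
    g=$(gcd "$g" "$w")
  done
  if (( max == 0 )); then return 1; fi   # every server is drained
  local n=${#names[@]} i=-1 cw=0
  while (( ${#out[@]} < count )); do
    i=$(( (i + 1) % n ))
    if (( i == 0 )); then
      cw=$(( cw - g ))                  # lower the bar once per full pass
      if (( cw <= 0 )); then cw=$max; fi
    fi
    if (( weights[i] >= cw )); then out+=("${names[i]}"); fi
  done
  echo "${out[*]}"
}

# Weights from the example above: 3, 2 and 1.
wrr_sequence 6 192.168.100.2,3 192.168.100.3,2 127.0.0.1,1
```

Over every six picks the servers get 3, 2 and 1 requests, interleaved rather than in bursts.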

- Direct Routing (gatewaying): the packet is sent directly to the farm, unchanged.

- NAT (masquerading): essentially a clever NAT mechanism.

- IPIP encapsulation (tunneling): the packet is tunneled to the real server.

DR is the simplest, but if you need, for example, to change the destination port, you will have to write iptables rules. NAT, on the other hand, requires the default route of the entire farm to point at the director, which is not always convenient, especially if the real servers also have external addresses.

Several schedulers ship with LVS, and the documentation describes them in detail. Here are only the main ones.
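The forwarding method is chosen per real server when it is added. Here is a small helper (its name is my own) that only prints the command, nothing is executed; it maps the three methods to the ipvsadm switches `-g` (gatewaying, i.e. DR), `-m` (masquerading, i.e. NAT) and `-i` (ipip, i.e. tunneling).

```bash
#!/usr/bin/env bash
# Print (do not run) an "ipvsadm -a" command for a TCP real server,
# choosing the forwarding-method switch by name. Helper name is hypothetical.
lvs_add_real() {
  local vip=$1 rip=$2 method=$3 weight=${4:-1} flag
  case $method in
    dr)  flag=-g ;;   # direct routing: packet forwarded unchanged
    nat) flag=-m ;;   # masquerading: real servers must route back via the director
    tun) flag=-i ;;   # IP-in-IP encapsulation
    *)   echo "unknown method: $method" >&2; return 1 ;;
  esac
  echo "ipvsadm -a -t $vip -r $rip $flag -w $weight"
}

lvs_add_real 192.168.100.100:80 192.168.100.2:80 dr 3
# NAT rewrites the destination, so the real port may differ from the VIP port:
lvs_add_real 192.168.100.100:80 10.0.0.2:8080 nat 1
```

The second call illustrates the trade-off described above: with NAT a port change comes for free, while with DR it would need extra iptables rules on the real server.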

- Round Robin: the familiar rotation, each server in turn.

- Weighted Round Robin: the same, but taking server weights into account.

- Least Connection: new connections go to the server with the fewest connections.

- Weighted Least Connection: the same, but with weights.
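The weighted least-connection rule fits in a few lines: pick the server with the smallest ratio of connections to weight. This sketch compares the ratios by cross-multiplying to stay in integer arithmetic. It is a simplification: as far as I know, the in-kernel `wlc` also counts inactive connections (at a much lower cost), which is ignored here. The input format is hypothetical.

```bash
#!/usr/bin/env bash
# Simplified weighted least-connection choice.
# Each argument is "name,active_connections,weight" (hypothetical format).
wlc_pick() {
  local spec name conns weight best="" best_c=0 best_w=0
  for spec in "$@"; do
    IFS=, read -r name conns weight <<< "$spec"
    if (( weight <= 0 )); then continue; fi      # weight 0: out of rotation
    # conns/weight < best_c/best_w, compared without division
    if [[ -z $best ]] || (( conns * best_w < best_c * weight )); then
      best=$name best_c=$conns best_w=$weight
    fi
  done
  [[ -n $best ]] || return 1                     # no usable server
  echo "$best"
}

# 6/3 = 2, 3/2 = 1.5, 1/1 = 1: the third server has the lowest load per weight.
wlc_pick 192.168.100.2:80,6,3 192.168.100.3:80,3,2 127.0.0.1:80,1,1
```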

In addition, you can mark a service as requiring persistence, i.e. keeping a user on the same server for a given period of time: all new requests from the same IP address will go to the same server.

Pitfalls

So, following the example above, we should now have a virtual server on VIP 192.168.100.100, which ipvsadm happily confirms:
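In ipvsadm, persistence is switched on per virtual service with `-p [timeout]` (in seconds), for example `ipvsadm -A -t 192.168.100.100:80 -s wlc -p 600`. The simulation below only illustrates the idea: a client that comes back within the timeout gets the same server, otherwise a new one is chosen. The server list, the timeout and the round-robin fallback are assumptions, and the expiry rule is simplified compared with the kernel's persistence templates.

```bash
#!/usr/bin/env bash
# Simulation of per-client persistence (needs bash 4+ for associative arrays).
SERVERS=(192.168.100.2 192.168.100.3 192.168.100.4)   # hypothetical farm
TIMEOUT=300                                            # seconds of stickiness
declare -A AFFINITY=()    # client IP -> server it is pinned to
declare -A LAST_SEEN=()   # client IP -> time of its last request
rr_next=0
PICKED=""

# persist_pick CLIENT_IP NOW: sets PICKED (a global, so state survives
# between calls; do not call this inside $(...)).
persist_pick() {
  local client=$1 now=$2
  if [[ -n ${AFFINITY[$client]:-} ]] && (( now - ${LAST_SEEN[$client]} < TIMEOUT )); then
    PICKED=${AFFINITY[$client]}                        # still sticky
  else
    PICKED=${SERVERS[rr_next % ${#SERVERS[@]}]}        # new choice
    rr_next=$(( rr_next + 1 ))
    AFFINITY[$client]=$PICKED
  fi
  LAST_SEEN[$client]=$now
}

persist_pick 10.0.0.1 0;    echo "$PICKED"   # first visit
persist_pick 10.0.0.2 10;   echo "$PICKED"   # another client
persist_pick 10.0.0.1 100;  echo "$PICKED"   # same server as the first visit
persist_pick 10.0.0.1 1000; echo "$PICKED"   # idle > TIMEOUT: re-balanced
```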

```
ipvsadm -L -n
IP Virtual Server version 1.0.7 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn
TCP  192.168.100.100:80 wlc
  -> 192.168.100.2:80             Route   3      0          0
  -> 192.168.100.3:80             Route   2      0          0
  -> 127.0.0.1:80                 Local   1      0          0
```

However, if you try to connect, nothing happens! What's wrong? The first step is to bring up the VIP alias on the director.

```
# ifconfig eth0:0 inet 192.168.100.100 netmask 255.255.255.255
```

But failure awaits us here too: the packet arrives at the real server's interface unmodified, still addressed to the VIP, so the kernel simply drops it as not intended for that machine. The easiest way to solve this is to bring up the VIP on the real servers' loopback interface.

```
# ifconfig lo:0 inet 192.168.100.100 netmask 255.255.255.255
```

Never bring it up on an interface that faces the same subnet as the director's external interface. Otherwise the upstream router may cache the MAC address of the wrong machine, and all traffic will go to the wrong place.
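On current Linux systems the same step is usually done with iproute2, and there is a related catch: by default Linux answers ARP requests for any local address on any interface, so a real server with the VIP on `lo` may still answer ARP for it on `eth0`. The usual remedy is the `arp_ignore`/`arp_announce` sysctls. This sketch prints the commands by default and runs them only with `APPLY=1`; the helper names are mine, the VIP is the one from the example.

```bash
#!/usr/bin/env bash
# Real-server preparation for LVS-DR (sketch). Prints commands unless APPLY=1.
run() {
  if [[ ${APPLY:-0} == 1 ]]; then "$@"; else echo "$*"; fi
}

realserver_dr_setup() {
  local vip=$1
  # Accept packets for the VIP without owning it on the LAN-facing interface.
  run ip addr add "$vip/32" dev lo label lo:0
  # Answer ARP only for addresses configured on the receiving interface...
  run sysctl -w net.ipv4.conf.all.arp_ignore=1
  # ...and do not use the VIP as the source address of outgoing ARP requests.
  run sysctl -w net.ipv4.conf.all.arp_announce=2
}

realserver_dr_setup 192.168.100.100
```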

Now packets should flow where they need to. By the way, the director itself can also act as a real server, as our example shows.
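To check from a script that the table looks like the `ipvsadm -L -n` listing shown above, the output is easy to parse. A sketch with awk; it assumes the column layout of that listing (service lines starting with TCP or UDP, real-server lines starting with `->`), which may differ between ipvsadm versions.

```bash
#!/usr/bin/env bash
# Print "service real_server weight" for every real server found in
# "ipvsadm -L -n" output read from stdin (layout as in the listing above).
lvs_weights() {
  awk '
    $1 == "TCP" || $1 == "UDP" { svc = $2 }          # virtual service line
    $1 == "->" && svc != "" && $2 ~ /:[0-9]+$/ {      # real server line
      print svc, $2, $4
    }'
}

# Usage on a director:  ipvsadm -L -n | lvs_weights
```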

Single point of failure

In this setup, the director is an obvious single point of failure: if it goes down, the entire service goes down with it.

No problem: ipvsadm can run in daemon mode and synchronize the connection table (current connections) between several directors. One of them acts as the master, the rest as backups.

What is left for us to do? Move the VIP between directors on failure. HA solutions such as heartbeat help here.

Another task is monitoring the farm and promptly taking failed servers out of rotation. The easiest way to do that is with weights.

Many solutions have been written for both problems, tailored to different kinds of services. Personally, I liked Ultramonkey best, from the LVS authors themselves.

Red Hat has its own tool called Piranha, with its own set of daemons for monitoring the farm and the directors, and even a somewhat clunky web interface, but it does not support more than two directors in an HA cluster. I don't know why.
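The synchronization daemon is started through ipvsadm itself; note that ipvsadm calls the two roles `master` and `backup`. A sketch that prints the command for a given role; the interface `eth0` and sync ID 1 are assumptions, and the virtual service rules still have to be configured on every director.

```bash
#!/usr/bin/env bash
# Print the ipvsadm command that starts connection synchronisation.
# Interface and sync ID defaults are assumptions for this sketch.
lvs_sync_cmd() {
  local role=$1 iface=${2:-eth0} syncid=${3:-1}
  case $role in
    master|backup) ;;
    *) echo "role must be master or backup" >&2; return 1 ;;
  esac
  echo "ipvsadm --start-daemon $role --mcast-interface $iface --syncid $syncid"
}

lvs_sync_cmd master   # on the active director
lvs_sync_cmd backup   # on every standby director
```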

Ultramonkey

Ultramonkey consists of two main packages: heartbeat and ldirectord. The first provides HA for the directors, including bringing up and moving the VIP (and, generally speaking, it can be used for more than just directors); the second maintains the ipvsadm configuration and monitors the state of the farm.

Heartbeat needs three config files. The stock versions come with detailed comments, so I will just give examples.

authkeys

```
auth 2
#1 crc
2 sha1 mysecretpass
#3 md5 Hello!
```

Here we configure how the daemons on different machines authenticate each other.
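Heartbeat expects authkeys to be readable by root only (mode 600), and a real key is better than a memorable password. A sketch that writes the file with a random sha1 key; `/etc/ha.d/authkeys` is the usual location but may differ on your distribution.

```bash
#!/usr/bin/env bash
# Generate a heartbeat authkeys file with a random sha1 key (sketch).
write_authkeys() {
  local path=${1:-/etc/ha.d/authkeys} key
  key=$(od -An -tx1 -N16 /dev/urandom | tr -d ' \n')   # 32 hex characters
  ( umask 077 && printf 'auth 2\n2 sha1 %s\n' "$key" > "$path" )
  chmod 600 "$path"                                    # also fixes an existing file
}
# Copy the resulting file unchanged to every director in the cluster.
```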

haresources

This file says which resource we provide HA for and which services to start when it moves.

```
director1.foo.com IPaddr::192.168.100.100/24/eth0 ldirectord
```

That is, bring up 192.168.100.100/24 on interface eth0 and start ldirectord; director1.foo.com is the node that holds these resources by default.

ha.cf

```
keepalive 1
deadtime 20
udpport 694
udp eth0
node director1.foo.com # <-- uname -n !
node director2.foo.com
```

Here we describe how to maintain the HA cluster and which nodes belong to it.
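As the comment in ha.cf warns, each `node` entry must match the output of `uname -n` on that machine. A quick sketch to check this before starting heartbeat; the function name is mine.

```bash
#!/usr/bin/env bash
# Succeeds if this host's name (uname -n, or $2) appears on a "node" line.
ha_node_listed() {
  local cf=${1:-/etc/ha.d/ha.cf} me=${2:-$(uname -n)}
  awk -v me="$me" '
    $1 == "node" {
      for (i = 2; i <= NF; i++) {
        if ($i ~ /^#/) break          # the rest of the line is a comment
        if ($i == me) found = 1
      }
    }
    END { exit !found }' "$cf"
}

# Usage:  ha_node_listed || echo "$(uname -n) is not listed in ha.cf" >&2
```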

Ldirectord has just one config file, and it is fairly self-explanatory too.

```
checktimeout=10
checkinterval=2
autoreload=yes
logfile="/var/log/ldirectord.log"

# Virtual Service for HTTP
virtual=192.168.100.100:80
        real=192.168.100.2:80 gate
        real=192.168.100.3:80 gate
        service=http
        request="alive.html"
        receive="I'm alive!"
        scheduler=wlc
        protocol=tcp
        checktype=negotiate
```

That is, every 2 seconds ldirectord requests alive.html over HTTP, and if the response does not contain the string "I'm alive!" or, worse, the server does not respond at all, the daemon immediately sets its weight to 0 and the server is excluded from further scheduling.
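Roughly the same check can be done by hand, for example to debug why ldirectord has taken a server out: fetch the page, look for the expected string, and decide the weight. This sketch uses curl and only prints the resulting `ipvsadm -e` command (with `-g`, since the config above uses gate/DR); the helper names are mine, and ldirectord itself does more than this.

```bash
#!/usr/bin/env bash
# Sketch of a negotiate-style health check. Needs curl.
# probe URL EXPECT [TIMEOUT]: succeed if the page loads and contains EXPECT.
probe() {
  local url=$1 expect=$2 timeout=${3:-10} body
  body=$(curl -fsS --max-time "$timeout" "$url" 2>/dev/null) || return 1
  [[ $body == *"$expect"* ]]
}

# health_cmd VIP RIP URL EXPECT WEIGHT: print the command that keeps the
# server at WEIGHT if it passes the check, or drains it (weight 0) if not.
health_cmd() {
  local vip=$1 rip=$2 url=$3 expect=$4 weight=$5
  if probe "$url" "$expect"; then
    echo "ipvsadm -e -t $vip -r $rip -g -w $weight"
  else
    echo "ipvsadm -e -t $vip -r $rip -g -w 0"
  fi
}

# Example with values from the ldirectord config above:
#   health_cmd 192.168.100.100:80 192.168.100.2:80 \
#     http://192.168.100.2/alive.html "I'm alive!" 3
```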

You can also set weights yourself, for example by polling the farm from cron and computing them from the current loadavg and so on; nobody takes direct access to ipvsadm away from you.
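As an illustration, here is a sketch meant to be run from cron on the director. The formula is arbitrary (full weight on an idle server, half weight when the load average equals the CPU count), and how the director learns each server's load is left open; the ssh line in the comment is just one assumption.

```bash
#!/usr/bin/env bash
# Map a real server's 1-minute load average to an LVS weight (sketch).
# weight_from_load LOAD CPUS [MAX]: MAX when idle, MAX/2 at LOAD == CPUS,
# never below 1 so that weight 0 stays reserved for failed health checks.
weight_from_load() {
  awk -v l="$1" -v c="$2" -v m="${3:-10}" 'BEGIN {
    w = int(m * c / (c + l) + 0.5)
    if (w < 1) w = 1
    print w
  }'
}

# Print the command that would update one real server (DR, as in the example).
update_weight_cmd() {
  local vip=$1 rip=$2 load=$3 cpus=$4
  echo "ipvsadm -e -t $vip -r $rip -g -w $(weight_from_load "$load" "$cpus")"
}

# From cron, the load could be fetched e.g. with:
#   load=$(ssh 192.168.100.2 cat /proc/loadavg | cut -d' ' -f1)
update_weight_cmd 192.168.100.100:80 192.168.100.2:80 2.0 4
```

Keeping the minimum at 1 avoids fighting with ldirectord, which uses weight 0 to mark a dead server.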

Applicability

Although most material on the Internet treats web server balancing as the main use of LVS, it can in fact balance a great many services. If a protocol runs on a single port and is stateless, it can be balanced without any problems. Things are more complicated with multi-port protocols such as Samba and FTP, but solutions exist for those too.

By the way, a real server does not have to run Linux: almost any OS with a TCP/IP stack will do.

There are also so-called L7 routers, which operate not on ports but on knowledge of application-level protocols. Some Japanese developers are working on Ultramonkey-L7 for this purpose. But we will not cover it here.
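One such solution is to group several ports into one virtual service with a firewall mark: netfilter marks the packets, and ipvsadm balances by mark (`-f`) instead of by VIP:port. The sketch prints the commands for an FTP-style service on ports 20 and 21; the mark value is arbitrary, a real FTP setup also needs its passive-mode port range marked, and LVS additionally ships an `ip_vs_ftp` helper module worth reading about in the LVS-HOWTO.

```bash
#!/usr/bin/env bash
# Print commands for a firewall-mark virtual service covering several ports.
# fwmark_service_cmds VIP PORTS MARK RIP...  (helper name is hypothetical)
fwmark_service_cmds() {
  local vip=$1 ports=$2 mark=$3; shift 3
  # Mark every TCP packet to the VIP on any of the listed ports.
  echo "iptables -t mangle -A PREROUTING -d $vip/32 -p tcp -m multiport --dports $ports -j MARK --set-mark $mark"
  # One virtual service for the mark; persistence keeps a client's
  # control and data connections on the same real server.
  echo "ipvsadm -A -f $mark -s wlc -p 600"
  local rip
  for rip in "$@"; do
    echo "ipvsadm -a -f $mark -r $rip -g -w 1"
  done
}

fwmark_service_cmds 192.168.100.100 20,21 1 192.168.100.2 192.168.100.3
```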

What else to read?

- LVS-HOWTO: required reading

- LVS-mini-HOWTO: the short version

- Red Hat Piranha

- Ultra Monkey

What balancing solutions do you use?

I welcome any comments and corrections.

Source: https://habr.com/ru/post/104621/
