iPi Soft Motion Capture

Motion capture (MoCap) is a technology for recording the movements of actors for later use in computer graphics. Because the human body (like an animal's) is quite complex, it is much easier, more convincing, and often cheaper to record actors' movements and transfer them to three-dimensional models than to animate those models by hand.

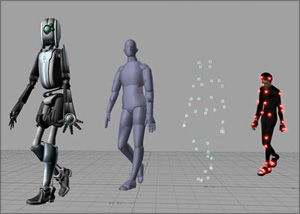

Most motion capture systems rely on markers or sensors mounted on the actor's body, usually on a suit. But there is also a way to capture movement with the help of chroma key. We talked with Mikhail Nikonov, a member of the iPi Soft team (which won at the Dubna innovation school), and discussed the details of the project and its prospects.

Tell us about the project.

Our company develops technology for creating three-dimensional animation of people. We believe this is currently one of the key problems in 3D graphics.


Mikhail Nikonov

Why?

Because rendering and the other aspects of three-dimensional animation, even physical modeling, are already well developed, and the viewer no longer notices further progress or further effort in rendering. At the same time, as someone who works in animation, I can say that in many films, and especially in many computer games, the quality of animation still needs to improve.

Body animation or facial animation?

Both facial and full body animation.

And what problems do you solve better than traditional motion capture, which relies on special video cameras, chroma key, lighting, and suits with sensors or markers?

We designed our system around maximum ease of use for animators. All the users we talk to say that mobility matters to them, and so does a flexible shooting schedule: they do not want to book a studio in advance, and if something needs to be redone, they do not want to wait a month for the studio. That is the problem we solve first of all. We give animators and 3D graphics studios a portable motion capture system that, if desired, can be issued to every animator.

What does this look like?

The system consists of a video camera and software that uses image processing and computer vision algorithms to extract skeletal animation from recorded video. For the user, the system is a program installed on the computer; the camera is bought separately in a store. We recommend the Sony PlayStation Eye camera, which costs $30 and is sold at any electronics store.

And the markers?

The whole point is that we do not use markers. That is the basis of our solution, and it is why we started developing our system. We want to send suits with markers and suits with sensors off to the warehouse, because they constrain creative freedom.

What about challenging shooting conditions?

Our system was tested by a well-known Moscow studio. They came with a laptop and cameras, and we did a capture in the first room we came across. No markers, a difficult background, and yet our technology coped.

Moving camera?

We cannot work with a moving camera yet; our technology is not that mature. For now we have a number of other advantages: even with low-resolution cameras, at 320x240, we get fairly high-quality animation. In general, though, we recommend 640x480.

Is the frame rate important?

Yes. We recommend the Sony camera precisely because it supports up to 60 frames per second. We also recommend using multiple cameras: currently three or four, and we support up to four. Modern computers can record from six cameras simultaneously, so we are now working on six-camera capture.

We will also work on a single-camera version. Microsoft is going to release a three-dimensional camera, and then motion capture will become even simpler.
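To get a rough feel for why the number of simultaneous cameras depends on the computer, one can estimate the raw video data rate at the recommended settings. The sketch below is only an illustration: it assumes uncompressed video at 2 bytes per pixel, which is an assumption made for this example and not a figure from iPi Soft, and the function name is hypothetical.

```python
def raw_video_rate_mb_per_s(width, height, fps, cameras, bytes_per_pixel=2):
    """Uncompressed video data rate in megabytes per second (1 MB = 10**6 bytes).

    bytes_per_pixel=2 is an assumed pixel format, not a documented value.
    """
    bytes_per_second = width * height * bytes_per_pixel * fps * cameras
    return bytes_per_second / 1_000_000


# Resolution and frame rate recommended in the interview: 640x480 at 60 fps.
for cameras in (1, 4, 6):
    rate = raw_video_rate_mb_per_s(640, 480, 60, cameras)
    print(f"{cameras} camera(s): {rate:.1f} MB/s")
```

Under these assumptions the data rate grows linearly with the number of cameras, so going from four cameras to six means moving and storing one and a half times as much data.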

Does the capture occur in real time?

Not yet. Our algorithm demands a lot of computing power: we use all the processor cores in the system, and we also use the video card for computation, which is known as GPGPU. Even on the most powerful modern computers, we have so far reached a speed of 2 frames per second. But this is just a matter of time: over the past year we made our algorithm four times faster, and computers became about twice as fast, so over the next year we will speed up video processing further, and real-time processing is not far off.
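The figures in this answer allow a back-of-the-envelope estimate of how far away real time might be. The sketch below assumes that last year's gains (4x from the algorithm, 2x from hardware) simply multiply and repeat every year, and it treats 30 and 60 frames per second as "real time". Both are assumptions made for illustration, not statements from iPi Soft.

```python
import math


def years_until_real_time(current_fps, target_fps, speedup_per_year):
    """Years needed to reach target_fps if speed grows by a constant yearly factor."""
    if current_fps >= target_fps:
        return 0.0
    return math.log(target_fps / current_fps) / math.log(speedup_per_year)


current_fps = 2.0          # processing speed quoted in the interview
algorithm_speedup = 4.0    # algorithm improvement over the past year
hardware_speedup = 2.0     # approximate hardware improvement over the past year
combined = algorithm_speedup * hardware_speedup  # assumed to multiply and to continue

for target_fps in (30.0, 60.0):  # assumed definitions of "real time"
    years = years_until_real_time(current_fps, target_fps, combined)
    print(f"{target_fps:.0f} fps: about {years:.1f} years at {combined:.0f}x per year")
```

The estimate is very sensitive to the assumed yearly factor: if only the hardware trend held (2x per year), the same calculation would give several years rather than one or two.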

Your future plans?

We are only at the beginning. Of course, we will keep improving this technology; it will take several years of development to reach maturity. It is no secret that we are also working on related technologies. What you see right now is motion capture for human actors. A person is a complex creature, the actor's performance matters, and it is almost impossible to simulate an actor's performance on a computer. With animals it is the other way around: we believe they should be modeled on a computer. This is the so-called synthesis of movements. We are now building prototypes of technologies that would realistically simulate the animation of animals and monsters using genetic algorithms. You will have a virtual laboratory in which you can breed creatures and record the animation of the resulting creatures in different situations, for example a herd moving toward food, or a predator attack.
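For readers unfamiliar with genetic algorithms, the general idea can be shown with a toy example. The sketch below is not iPi Soft's method: the "genome" is a list of four made-up gait parameters, and the fitness function just measures closeness to an invented target, standing in for what would really be a physics simulation of the creature. All names and values are hypothetical.

```python
import random

GENOME_LENGTH = 4
# Made-up "ideal" gait parameters; a real system would score a simulated creature instead.
TARGET = [0.8, 0.3, 0.5, 0.1]


def fitness(genome):
    """Higher is better; 0.0 means a perfect match to the made-up target."""
    return -sum((g - t) ** 2 for g, t in zip(genome, TARGET))


def random_genome(rng):
    return [rng.random() for _ in range(GENOME_LENGTH)]


def crossover(a, b, rng):
    """Uniform crossover: each gene is taken from one of the two parents at random."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def mutate(genome, rng, rate=0.2, scale=0.1):
    """Add small Gaussian noise to each gene with probability `rate`."""
    return [g + rng.gauss(0.0, scale) if rng.random() < rate else g for g in genome]


def evolve(generations=50, population_size=30, elite=2, seed=0):
    rng = random.Random(seed)
    population = [random_genome(rng) for _ in range(population_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: population_size // 2]  # truncation selection
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(population_size - elite)
        ]
        population = population[:elite] + children  # elitism: the best survive unchanged
    best = max(population, key=fitness)
    return best, history


best, history = evolve()
print("best genome:", [round(g, 3) for g in best])
print("best fitness:", round(fitness(best), 5))
```

Because the best individuals are carried over unchanged, the best fitness never gets worse from one generation to the next. In a "virtual laboratory" of the kind described above, the fitness function would presumably be replaced by a measure of how well a simulated creature moves.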

With an eye on Spore?

Maybe, although I must say that Spore disappointed me: the authors greatly simplified the game, abandoning the original approach in which they wanted to model creatures more honestly, and instead made them more intuitive but ignored biology and even the laws of physics. In the game a creature can have a large mass and be fast at the same time. In real life it does not work that way. If you have a large mass, you are a lumbering elephant, and for speed you need to be compact and lean, like a cheetah.

By the way, you can try our motion capture technology yourself. Any modern laptop is suitable for recording the video. For processing we recommend a powerful desktop computer running Windows, most likely a gaming computer, on which you can process the animation quickly.

* * *

We asked Anatoly Belikov, whom you already know from our story about panoramic views of Paris, to comment on iPi Soft's development. Here is how the expert comments on the iPi Soft capture system: "There is one 'but'. The main advantage of this program, working without markers, is also its main drawback. Conventional motion capture devices simply track the movement of markers in space. The program does not know what it is tracking, and the further use of such data is not automated. The resulting animation has to be attached to a virtual object by hand. Despite all the difficulties, this lets you track the movement of anything. You can stick markers on a face, on objects in the actors' hands, on a cat, a dog, and so on, and programs for optical tracking of markers with consumer cameras have been around for many years. So it is important to understand that the iPi Soft technology is so far limited to capturing the movement of human actors. But, of course, I will definitely use this technology. This is a breakthrough."

* * *


Do not hesitate to join the ranks of Intel's readers on Habr. Good luck!

Source: https://habr.com/ru/post/103718/
