SpiNNaker - neural computer

After reading the recently published article “Review of modern projects of large-scale modeling of brain activity,” I would like to talk about another similar project, run by a research group at the University of Manchester in the UK under the guidance of Professor Steve Furber, one of the principal designers of the BBC Microcomputer and the 32-bit ARM RISC microprocessor.

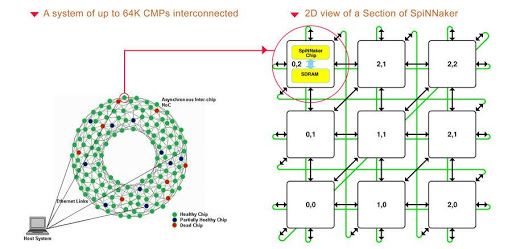

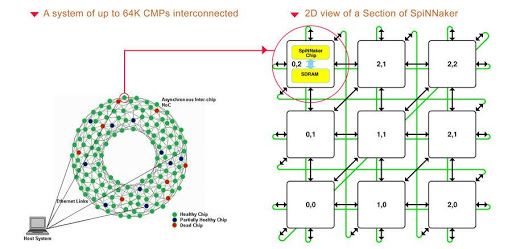


An excursion into the history of research at the University of Manchester

The university has an outstanding history of computer development and has played a revolutionary role in computer science and artificial intelligence. The world's first stored-program electronic computer, the Small-Scale Experimental Machine (SSEM), also known as the “Baby”, was built there in 1948 by Frederic Williams and Tom Kilburn. Its distinguishing characteristic was that data and programs were stored together in the machine's memory (in other words, the von Neumann architecture). The machine was created not so much for computation as for studying the properties of computer memory based on cathode-ray tubes (“Williams tubes”).

The success of the experiment led to the creation the following year of the Manchester Mark 1, which already had a paper tape reader and punch and could transfer data to and from a magnetic drum without stopping the program. The Mark 1 was also the first computer in the world to use index registers. Two years later it served as the basis for the Ferranti Mark 1, the world's first commercially available general-purpose computer. These machines became the progenitors of almost all modern computers.

Alan Turing, one of the founders of computer science and artificial intelligence, was directly involved in the experiments with the “Baby” and the Mark 1. Turing believed that computers would eventually be able to think like a person. He published his views in the paper “Computing Machinery and Intelligence”, which proposed a thought experiment (now known as the Turing test) for assessing a machine's ability to think: can a person conversing with an unseen interlocutor tell whether it is another person or a machine?


Motives of the project

The Turing test has not yet been passed, and the approach to modeling thought that it proposes remains the subject of heated scientific debate. The main shortcomings of modern computers, compared with the brains of living beings, are their inability to learn and their fragility in the face of hardware failures: the entire system can break down when a single component fails. In addition, computers of the von Neumann architecture lack a number of capabilities that come easily to the human brain, such as associative memory and the ability to solve problems of object classification and recognition, clustering or prediction.

Another reason for the popularity of such projects is that microprocessors are approaching the physical limits of their performance growth. Industry giants recognize that adding more transistors will no longer yield a significant increase in the speed of a single processor, so the logical step is to increase the number of processors on a chip, in other words, to take the path of massive parallelism.

The dilemma is whether to push the power of each microprocessor to the limit and only then put as many of them as possible into the final product, or to use simplified processors that perform only elementary mathematical operations. If a task can be divided into an arbitrary number of largely independent subtasks, a system built on the second principle wins.

Objectives of the SpiNNaker project

The motives described above drive the research team working on a project called Spiking Neural Network Architecture (SpiNNaker). The goal of the project is to create a device with high fault tolerance, achieved by dividing the computing power among a large number of parts, each performing very simple tasks. If any of these parts fails, the system continues to function correctly simply by reconfiguring itself: it excludes the unreliable node, redistributes its responsibilities to neighboring nodes, and finds alternative “synaptic” connections for signal transmission. Something similar happens in the human brain: a person loses roughly one neuron every second, yet this has little effect on the ability to think.

Another advantage of the system is its capacity for self-learning, a distinctive feature of neural networks. Such a machine should therefore help scientists study the processes occurring in the cerebral cortex and improve their understanding of the complex interactions between brain cells.

In the first stage of the project, the plan is to simulate up to 500,000 neurons in real time, which roughly corresponds to the number of neurons in a bee's brain. In the final stage, the device is expected to model up to a billion neurons, which is about 1% of the number of neurons in the human brain.

System architecture

The approach to the computer's design is remarkable. The proposed device consists of a regular matrix of 50 chips. Each chip has 20 ARM968 microprocessors: 19 of them are dedicated to simulating neurons, and the remaining one controls the operation of the chip and keeps an activity log.

Fig. 1. System diagram

Each such chip is a complete subsystem with its own router, local memory with direct memory access (32 KB for instructions and 64 KB for data) and its own messaging system with a throughput of 8 Gbit/s. In addition, each chip has 1 GB of external memory for storing the network topology. According to the developers, this balance of processing power, internal memory and communication bandwidth makes it possible to model up to 1,000 neurons on each microprocessor in real time.
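The figures quoted above imply an upper bound on the size of the network, which can be checked with simple arithmetic. The sketch below is only an illustration: the constant and function names are made up for this example, and it takes the article's numbers (50 chips, 20 processors per chip with one reserved for control, 1,000 neurons per processor) at face value.

```python
# Figures quoted in the article; all names here are illustrative assumptions.
CHIPS = 50
CORES_PER_CHIP = 20
CONTROL_CORES_PER_CHIP = 1
NEURONS_PER_CORE = 1_000


def neurons_per_chip():
    """Neurons one chip can simulate, excluding the control processor."""
    return (CORES_PER_CHIP - CONTROL_CORES_PER_CHIP) * NEURONS_PER_CORE


def neuron_capacity(chips=CHIPS):
    """Upper bound on neurons simulated in real time by `chips` chips."""
    return chips * neurons_per_chip()


def chips_needed(neurons):
    """Smallest number of chips able to host `neurons` neurons."""
    per_chip = neurons_per_chip()
    return -(-neurons // per_chip)  # ceiling division on integers


if __name__ == "__main__":
    print(neuron_capacity())            # 50 chips
    print(chips_needed(500_000))        # first-stage target
    print(chips_needed(1_000_000_000))  # final-stage target
```

Under these assumptions, 50 chips can host up to 950,000 neurons, the first-stage target of 500,000 neurons fits on 27 chips, and a billion neurons would need 52,632 chips of this design.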

A distinctive feature of this system is the complete absence of synchronization. Each neuron, on reaching a certain internal state, sends a signal to its postsynaptic neuron, which in turn sends or does not send (depending on its own internal state) a new signal to the next neuron.
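The event-driven behaviour described here can be illustrated with a small software model. This is a minimal sketch under stated assumptions, not the SpiNNaker implementation: the `Neuron` class, the simple accumulate-and-fire rule, the threshold of 1.0 and the synaptic delays are all hypothetical, and a priority queue stands in for hardware that has no global clock.

```python
import heapq


class Neuron:
    """Hypothetical accumulate-and-fire neuron (an assumption, not from the article)."""

    def __init__(self, threshold=1.0):
        self.threshold = threshold
        self.potential = 0.0
        self.synapses = []  # list of (target_id, weight, delay)

    def receive(self, weight):
        """Integrate one incoming spike; return True if the neuron fires."""
        self.potential += weight
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run(neurons, initial_spikes, max_events=1000):
    """Process spike events in time order; return a list of (time, neuron_id) firings."""
    queue = list(initial_spikes)  # entries are (time, target_id, weight)
    heapq.heapify(queue)
    fired = []
    processed = 0
    while queue and processed < max_events:
        time, target, weight = heapq.heappop(queue)
        processed += 1
        # Work happens only when a spike arrives; idle neurons cost nothing.
        if neurons[target].receive(weight):
            fired.append((time, target))
            for post, w, delay in neurons[target].synapses:
                heapq.heappush(queue, (time + delay, post, w))
    return fired


def demo():
    """Chain 0 -> 1 -> 2; neuron 1 needs two input spikes before it fires."""
    neurons = {i: Neuron() for i in range(3)}
    neurons[0].synapses.append((1, 0.6, 1.0))
    neurons[1].synapses.append((2, 1.0, 1.0))
    return run(neurons, [(0.0, 0, 1.0), (2.0, 0, 1.0)])
```

In `demo()`, neuron 1 stays silent after the first spike from neuron 0 and fires only after the second, which shows how a postsynaptic neuron "sends or does not send" depending on its internal state.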

Also noteworthy is the ability to reconfigure the neural network while it is running. This makes it possible to isolate faulty nodes, to form new connections between neurons and, probably, even to let new neurons appear as needed.
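Isolating a faulty node amounts to finding a path for signals that avoids it. The sketch below illustrates the idea with a breadth-first search over a rectangular grid of chips. The four-neighbour grid, the coordinate scheme and the `find_route` function are assumptions made for this example; the article does not describe the actual interconnect topology or routing algorithm.

```python
from collections import deque


def find_route(width, height, source, target, failed=frozenset()):
    """Return a shortest list of (x, y) chips from source to target that
    avoids every chip in `failed`, or None if no such route exists."""
    if source in failed or target in failed:
        return None
    previous = {source: None}  # also serves as the visited set
    frontier = deque([source])
    while frontier:
        node = frontier.popleft()
        if node == target:
            path = []
            while node is not None:  # walk back to the source
                path.append(node)
                node = previous[node]
            return path[::-1]
        x, y = node
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            nx, ny = nxt
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and nxt not in failed and nxt not in previous:
                previous[nxt] = node
                frontier.append(nxt)
    return None


if __name__ == "__main__":
    print(find_route(3, 3, (0, 0), (2, 0)))
    print(find_route(3, 3, (0, 0), (2, 0), failed={(1, 0)}))
```

When chip `(1, 0)` is marked as failed, the route from `(0, 0)` to `(2, 0)` grows from two hops to four but still exists, which is the behaviour the article describes as finding alternative connections.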

Before the simulation starts, a configuration file is loaded into the system that defines the placement of the neurons and the initial network data. Loading data this way requires only a very simple operating system on each chip. In operation, the machine requires a constant stream of information at its input, and the result is passed to an output device.
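The article does not give the format of the configuration file, so the following is only a guess at what "the placement of the neurons" might look like in software. The `Placement` type, the `place_neurons` function and the fill-one-processor-at-a-time policy are hypothetical; the default sizes are the figures quoted earlier in the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Where one neuron lives (hypothetical structure)."""
    chip: int
    core: int


def place_neurons(neuron_count, chips=50, cores_per_chip=19, neurons_per_core=1000):
    """Assign neuron ids 0..neuron_count-1 to chips and cores, filling each
    core completely before moving on to the next one."""
    capacity = chips * cores_per_chip * neurons_per_core
    if neuron_count > capacity:
        raise ValueError(f"{neuron_count} neurons exceed capacity {capacity}")
    placements = {}
    for neuron_id in range(neuron_count):
        slot = neuron_id // neurons_per_core  # index of the core, machine-wide
        placements[neuron_id] = Placement(
            chip=slot // cores_per_chip,
            core=slot % cores_per_chip,
        )
    return placements


if __name__ == "__main__":
    table = place_neurons(40_000)
    print(table[0], table[1_000], table[19_000])
```

A real loader would also have to describe the connections between neurons and their initial state; this sketch covers placement only.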

Fig. 2. Test chip

A monitoring system is needed to observe the processes inside the machine for maintenance, error correction and manual reconfiguration. For this purpose, the machine can be stopped manually with the current state of the network preserved and, after any necessary changes, loaded with a new configuration.

Prospects

Although the machine is primarily intended for modeling neural networks, it could also be used in other applications that require large computing power, such as protein folding, decryption or database search.

Source: https://habr.com/ru/post/102400/
