FCoE: The Future of Fibre Channel

With all the noise around Fibre Channel over Ethernet (FCoE) and announcements of support from almost every manufacturer in our industry, it looks as if this transport intends to eventually push out existing Fibre Channel networks completely. The standardization effort ended with ratification in 2009, and many manufacturers, including NetApp (among the first) and our long-time partner Cisco, are actively bringing FCoE products to market.

If you use Fibre Channel technology today, you should understand this new technology and prepare for its arrival. In this article, I will try to answer some important questions about FCoE, such as:

- What is FCoE?

- Why choose FCoE?

- What are the capabilities of FCoE?

- What should we prepare for in the future?

What is FCoE?

Fibre Channel over Ethernet, or FCoE, is a new protocol (transport) defined in a standard from the T11 committee. (T11 is the committee of the InterNational Committee for Information Technology Standards, INCITS, responsible for Fibre Channel.) FCoE carries Fibre Channel frames over Ethernet by encapsulating them in Ethernet jumbo frames. The standard was fully ratified in 2009.
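The encapsulation can be sketched in a few lines of Python. This is a simplified illustration, not a working initiator: it assumes the FC-BB-5 layout (EtherType 0x8906, a 14-byte FCoE header ending in the start-of-frame code, a 4-byte trailer starting with the end-of-frame code) and leaves out the 802.1Q tag and the Ethernet FCS, which the NIC normally adds. The function name `encapsulate` and the SOF/EOF values in the usage note are chosen for the example; check the real code points against the standard.

```python
import struct

FCOE_ETHERTYPE = 0x8906  # EtherType assigned to FCoE


def encapsulate(dst_mac: bytes, src_mac: bytes, fc_frame: bytes,
                sof: int, eof: int) -> bytes:
    """Wrap an FC frame (FC header + payload + CRC) in an FCoE frame.

    Simplified: no 802.1Q tag and no Ethernet FCS.
    """
    if len(dst_mac) != 6 or len(src_mac) != 6:
        raise ValueError("MAC addresses must be 6 bytes long")
    # Ethernet header: destination MAC, source MAC, EtherType.
    eth_header = dst_mac + src_mac + struct.pack("!H", FCOE_ETHERTYPE)
    # FCoE header: 4-bit version plus 100 reserved bits (13 zero bytes
    # for version 0), followed by the 1-byte start-of-frame code.
    fcoe_header = bytes(13) + bytes([sof])
    # FCoE trailer: 1-byte end-of-frame code plus 3 reserved bytes.
    fcoe_trailer = bytes([eof]) + bytes(3)
    return eth_header + fcoe_header + fc_frame + fcoe_trailer
```

For example, `encapsulate(b"\x01" * 6, b"\x02" * 6, bytes(36), 0x2E, 0x42)` wraps a dummy 36-byte FC frame; the FC frame itself travels unchanged inside the Ethernet frame, which is why the FC protocol and its tooling survive the move to Ethernet.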

Why FCoE?

FCoE grew out of the idea of I/O consolidation: letting different types of traffic coexist safely on a single “wire”, which reduces the variety of cabling and simplifies it, cuts the number of adapters required per host, and lowers power consumption.


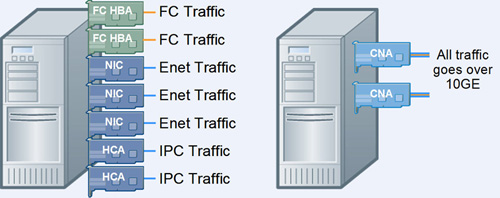

Figure 1) Reducing complexity using FCoE.

The force driving FCoE forward is the need to reduce total cost of ownership (TCO) while preserving existing infrastructure, backward compatibility, and familiar procedures and processes. By converging Fibre Channel and Ethernet and eliminating the need for separate network technologies, FCoE promises a significant reduction in network complexity and, given the rapid decline in the price of 10Gb Ethernet components, in cost as well.

Initially, most FCoE implementations were done at the level of host systems and switches, while storage systems continued to use native Fibre Channel rather than FCoE. This helps preserve the large investments made in FC infrastructure over the years.

The big advantage of FCoE is that it provides a smooth migration from FC as an interface to Ethernet (while keeping FC as a protocol). You can expand your FC network with Ethernet switches, or replace parts of it, and so move from one network technology (FC) to another (Ethernet) as it becomes necessary.

In the long run, if FCoE is successful, you will be able to choose a storage system with native FCoE support when you upgrade your infrastructure or build a new data center. NetApp has announced native support for the protocol, with FCoE target adapters for its systems, and in parallel will continue to support Fibre Channel on all of its systems.

And recently, NetApp and Cisco announced that they had completed certification of the industry's first end-to-end FCoE solution, “from host to storage,” for server virtualization with VMware vSphere.

FCoE implementation

There are two possible ways to deploy FCoE in your IT system:

- Use a hardware initiator with a converged network adapter (CNA) and a hardware target, which is similar to the existing Fibre Channel usage model.

Figure 2) CNA supports both FC and Ethernet on a single device, reducing the number of network devices required.

CNA manufacturers are mostly the companies we know from FC HBAs, such as QLogic, Emulex, and Brocade, but we should expect traditional Ethernet NIC manufacturers, such as Intel and Broadcom, to join them. Both are actively involved in the T11 working group (FC-BB-5) that develops the FCoE standards.

- Use a software initiator and target with an ordinary 10 Gigabit Ethernet (10GbE) NIC.

In December 2007, Intel released a software initiator to help develop FCoE solutions for Linux. Various Linux distributions are expected to ship with a software FCoE initiator, much as all operating systems today are iSCSI-ready.

I think such a software solution will be fast enough, at a relatively low price compared to a purely hardware solution. In practice we buy servers with room to grow, with a performance margin for the expected growth of our tasks and applications, so they usually have spare CPU capacity. The iSCSI market confirms this theory, including in virtual infrastructures.

Figure 3) The software FCoE initiator stack.

What stays the same?

For those already using Fibre Channel, FCoE keeps the need to configure zoning and LUN mapping, as well as the usual fabric services, such as registered state change notification (RSCN) and Fabric Shortest Path First (FSPF) link-state path selection. This means that migration to FCoE will be relatively simple and familiar. Any change is painful, but a new protocol that reuses established procedures, processes, and know-how makes the move to Ethernet easier, and this will be a great advantage of FCoE.
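To make the zoning and LUN mapping logic concrete, here is a minimal sketch of the two checks an administrator reasons about in both FC and FCoE: two ports may talk only if they share a zone, and an initiator sees only the LUNs mapped to it. The zone names, WWPNs, LUN IDs, and function names are all made up for the illustration and do not correspond to any vendor's CLI or API.

```python
# Hypothetical zone set: zone name -> member WWPNs (all values invented).
TARGET = "50:0a:09:80:00:00:00:01"
HOST_DB = "10:00:00:00:c9:aa:aa:01"
HOST_WEB = "10:00:00:00:c9:bb:bb:02"

ZONES = {
    "zone_db": {HOST_DB, TARGET},
    "zone_web": {HOST_WEB, TARGET},
}

# Hypothetical LUN mapping on the storage system: initiator WWPN -> LUN IDs.
LUN_MAP = {
    HOST_DB: {0, 1},
    HOST_WEB: {2},
}


def is_zoned(zones, wwpn_a, wwpn_b):
    """Two ports can communicate only if at least one zone contains both."""
    return any(wwpn_a in members and wwpn_b in members
               for members in zones.values())


def visible_luns(zones, lun_map, initiator, target):
    """LUNs the initiator can reach: zoning must allow the path first,
    then the LUN mapping decides which LUNs are presented."""
    if not is_zoned(zones, initiator, target):
        return []
    return sorted(lun_map.get(initiator, ()))
```

With these tables, `visible_luns(ZONES, LUN_MAP, HOST_DB, TARGET)` returns `[0, 1]`, while the two hosts cannot reach each other because no zone contains both. The same model applies whether the frames travel over native FC or over Ethernet.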

How is FCoE different from iSCSI?

FCoE does not use TCP/IP, which iSCSI relies on, and therefore differs in a number of ways, such as:

- Use of the "pause frame"

- Use of per-priority pause

- No TCP retries (timeouts)

- Lack of IP routing capability

- No “broadcast storms” (ARP is not used)

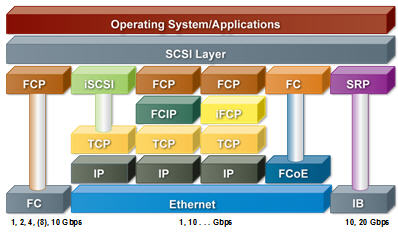

Figure 4) Comparison of various block protocols.

Since FCoE does not use the IP layer, it cannot be routed over IP. However, this does not mean that FCoE traffic can never cross a routed network: when necessary, it can be carried through tunnels such as FCIP.

The iSCSI protocol tolerates packet loss and does not necessarily require 10GbE. FCoE needs 10GbE and a lossless network, with infrastructure components that correctly handle pause frames and priority-based flow control (PFC, or per-priority pause) for traffic classes mapped to different priorities. The idea behind PFC is that, at times of high link utilization, high-priority traffic keeps flowing while lower-priority traffic is held back with a pause frame.

10GbE switches will also need to support Data Center Ethernet (DCE), a set of Ethernet extensions that includes classes of service, better flow control (congestion control), and improved management capabilities. FCoE also requires jumbo frame support, since an FC frame carries up to 2112 bytes of payload and cannot be fragmented in transit; iSCSI does not require jumbo frames.
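The effect of a per-priority pause can be shown with a toy model. This is only a sketch of the idea under simplifying assumptions: one sender, strict-priority scheduling, and a set of currently paused priorities. Real switches negotiate this in hardware, and the priority numbers, frame names, and the `transmit` function are invented for the example.

```python
from collections import deque


def transmit(queues, paused, slots):
    """Send up to `slots` frames, highest priority first.

    `queues` maps a priority number to a deque of frames; priorities
    listed in `paused` are skipped, and their frames stay queued
    instead of being dropped.
    """
    sent = []
    for _ in range(slots):
        for prio in sorted(queues, reverse=True):
            if prio not in paused and queues[prio]:
                sent.append(queues[prio].popleft())
                break
        else:
            break  # nothing is eligible to send in this slot
    return sent


# Hypothetical setup: priority 3 carries FCoE, priority 0 carries LAN traffic.
queues = {
    3: deque(["fcoe-1", "fcoe-2"]),
    0: deque(["lan-1", "lan-2"]),
}
```

Calling `transmit(queues, paused={0}, slots=4)` sends only the two FCoE frames; the LAN frames wait in their queue and go out on a later call once priority 0 is no longer paused. Nothing is discarded, which is the property FCoE depends on.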
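The jumbo frame requirement follows from simple arithmetic. The sketch below adds up the field sizes of a full-size FCoE frame, assuming the FC-BB-5 layout with an 802.1Q tag; the constant and function names are chosen for the example.

```python
# Field sizes in bytes for one FCoE frame on the wire.
ETH_HEADER = 14        # destination MAC + source MAC + EtherType
VLAN_TAG = 4           # 802.1Q tag carrying the priority
FCOE_HEADER = 14       # version, reserved bits, start-of-frame
FC_HEADER = 24         # Fibre Channel frame header
FC_MAX_PAYLOAD = 2112  # largest FC data field
FC_CRC = 4             # Fibre Channel CRC
FCOE_TRAILER = 4       # end-of-frame + reserved bytes
ETH_FCS = 4            # Ethernet frame check sequence

OVERHEAD = (ETH_HEADER + VLAN_TAG + FCOE_HEADER + FC_HEADER
            + FC_CRC + FCOE_TRAILER + ETH_FCS)

# Largest standard (non-jumbo) Ethernet frame with an 802.1Q tag.
STANDARD_MAX_FRAME = 1522


def fcoe_frame_size(payload_len=FC_MAX_PAYLOAD):
    """Total Ethernet frame size needed for an FC payload of this length."""
    return OVERHEAD + payload_len


def max_payload_without_jumbo():
    """Largest FC payload that would still fit a standard Ethernet frame."""
    return STANDARD_MAX_FRAME - OVERHEAD


print(fcoe_frame_size())            # 2180
print(max_payload_without_jumbo())  # 1454
```

A full-size FC frame therefore needs about 2180 bytes on the wire, well above the 1522-byte limit of a standard tagged Ethernet frame, which is why switches and adapters in the path must accept larger ("baby jumbo") frames.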

Choosing between FCoE and iSCSI

FCoE's stricter infrastructure requirements, compared to iSCSI, can influence which protocol you choose. In some cases, the choice is determined by which protocol the software vendor supports.

In addition, you might prefer iSCSI if your goals are:

- Low cost

- Ease of use

iSCSI will work on your current infrastructure with minimal changes. The network infrastructure requirements of FCoE can mean that you will need devices such as converged network adapters (CNAs) and new switches that support DCE. (In the future, you may be able to use existing NICs with a software initiator, the way iSCSI works today.)

Since iSCSI runs on top of TCP/IP, such a network will be more familiar and easier to install and manage. FCoE does not use TCP/IP. Its administration resembles that of a traditional FC SAN, which can be quite difficult if you have no FC SAN experience.

On the other hand, you might prefer FCoE if you already have significant experience with Fibre Channel SANs (FC SANs), especially if your requirements include:

- Support for mission-critical applications

- High data availability

- Highest possible performance

This does not mean that iSCSI cannot meet these requirements. However, Fibre Channel has long since proven itself in such systems; FCoE offers the same feature set and is fully compatible with existing FC SANs. It simply replaces the Fibre Channel physical layer with 10GbE.

FCoE's performance advantage over iSCSI has yet to be confirmed. Both use 10GbE, but TCP/IP can add latency for iSCSI, perhaps giving FCoE a slight advantage in a comparable environment.

These principles are consistent with current practice for both iSCSI and FC SANs. Until now, the most cost-effective application of iSCSI has been consolidating Windows storage environments, mainly on 1GbE. iSCSI is typically used in the secondary and backup data centers of large organizations, in the main data centers of smaller companies, and in remote offices.

Fibre Channel systems dominate the large data centers of large organizations and are, as a rule, used for mission-critical applications on UNIX and Windows systems. Typical uses are data warehousing, data mining, enterprise resource planning, and OLTP databases.
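The selection criteria discussed in this section can be summarized as a small rule of thumb. The function below is only a rough encoding of the article's guidance, not a sizing tool; its name, parameters, and the order of the checks are assumptions made for the illustration.

```python
def suggest_protocol(*, fc_san_experience: bool, lossless_10gbe: bool,
                     needs_ip_routing: bool, cost_sensitive: bool) -> str:
    """Return "FCoE" or "iSCSI" based on the rules of thumb in the text."""
    # FCoE cannot be routed over IP and needs a lossless 10GbE network,
    # so either constraint points to iSCSI.
    if needs_ip_routing or not lossless_10gbe:
        return "iSCSI"
    # FCoE pays off mainly for teams that already run FC SANs and are
    # not optimizing for the lowest possible cost.
    if fc_san_experience and not cost_sensitive:
        return "FCoE"
    return "iSCSI"
```

For example, a team with an existing FC SAN and DCE-capable 10GbE switches gets `"FCoE"`, while a remote office on ordinary Ethernet gets `"iSCSI"`. Real decisions also depend on what the software vendor supports, as noted above.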

What will happen to Fibre Channel?

With all the fuss around FCoE, what happens to Fibre Channel? Will there be a transition to 16Gb FC, or will only FCoE be developed from now on? Will Ethernet continue to evolve (40GbE and 100GbE)? According to the current roadmap, 16Gb FC is scheduled for 2011. Recent FCIA press releases claim strong support for 16Gb FC development alongside support for FCoE. I think 16Gb FC will certainly appear, but the big question is how quickly the market will adopt it relative to FCoE. Even today 8Gb FC, which has been available for several years, has clearly not replaced 4Gb FC everywhere. Many equipment manufacturers and customers with large FC networks are already actively reorienting toward FCoE as the more promising and economically viable solution.

What do you need to do?

What you need to do depends on your situation. If you have invested heavily in Fibre Channel and do not need to upgrade in the next few years, it may be best to do nothing. If you have an upgrade planned in the next year or two, take a serious look at FCoE. Apparently, the current FC switch manufacturers intend to move their users to Ethernet and may stop building native FC switches.

Technology can solve many problems, but relations between groups in large organizations are clearly not one of them. One problem that large companies faced when implementing iSCSI, for example, was the conflict over areas of responsibility between the network administrators and the storage administrators. In a traditional FC infrastructure, the storage administrators are fully responsible for the FC fabric and own all rights to it; with iSCSI, the network is managed by the company's network administrators. If FCoE succeeds, the groups will have to work together more closely than ever, and this, paradoxically, may be the biggest obstacle in FCoE's way into corporate IT infrastructure.

Conclusions

Although FCoE makes it harder to decide where and how to apply it, its long-term prospects and advantages are clear. By consolidating your networks on a single Ethernet fabric, you can dramatically reduce both capital and administration costs without giving up the choice of the protocol that best suits your applications.

Whether you choose iSCSI, FCoE, or a combination of the two, NetApp storage systems support all of these storage protocols simultaneously on the same system. Choosing NetApp storage can therefore provide confidence and investment protection as your IT infrastructure continues to develop.

Source: https://habr.com/ru/post/102059/
